Certified Tester – Testing with Generative AI (CT-GenAI)

The Certified Tester – Testing with Generative AI (CT-GenAI) certification is a specialist qualification offered under the ISTQB certification scheme. It builds upon the Foundation Level and gives testing and quality-engineering professionals a structured, practical understanding of how generative AI, particularly Large Language Models (LLMs), can be applied across the complete software testing lifecycle. The certification focuses on how, when, and why to use generative AI in testing activities, covering areas such as requirements analysis, test design, test automation, defect reporting, and continuous quality improvement.

Purpose and Value of the Certification

CT-GenAI goes beyond theoretical knowledge by focusing on hands-on application of generative AI in testing. Candidates build practical skills through prompt-engineering techniques and applied testing use cases that reflect current industry challenges. Alongside the benefits of GenAI, the certification stresses responsible and ethical use, helping professionals identify, manage, and mitigate key risks such as:

- AI hallucinations and unreliable outputs

- Bias and fairness issues

- Security and data privacy concerns

- Environmental and sustainability impacts

Target Audience

The CT-GenAI qualification is suitable for professionals who are directly or indirectly involved in software testing and quality assurance using generative AI technologies, including:

- Software testers and test analysts

- Test automation engineers

- Test managers and quality engineers

- User acceptance testers (UAT)

- Software developers working with AI-assisted testing

It is also highly valuable for professionals who require a solid conceptual understanding of generative AI in testing contexts, such as:

- Project and program managers

- Quality and process managers

- Software development managers

- Business analysts

- IT leaders and consultants

Prerequisites

Before attempting the CT-GenAI exam, candidates must hold the ISTQB® Certified Tester Foundation Level (CTFL) certification. This prerequisite ensures that all candidates have a strong grounding in core software testing principles.

Position Within the ISTQB Certification Scheme

The ISTQB certification framework supports professionals at every stage of their testing careers by offering both broad foundational knowledge and deep specialization. Professionals who achieve the CT-GenAI certification may choose to further advance their careers by pursuing:

- Core Advanced Level certifications (Test Analyst, Technical Test Analyst, Test Manager, Test Engineering)

- Expert Level certifications, such as Test Management or Improving the Test Process

CT-GenAI serves as a strong bridge between foundational testing knowledge and advanced, future-focused testing competencies.

Business Outcomes and Learning Objectives

A professional who successfully earns the CT-GenAI certification will be able to:

- Understand the core concepts, strengths, and limitations of generative AI technologies

- Apply effective prompt-engineering techniques for software testing tasks

- Identify risks associated with using generative AI in testing and implement appropriate mitigation strategies

- Recognize practical applications of generative AI solutions across different testing activities

- Contribute meaningfully to defining and implementing an organizational generative AI testing strategy and roadmap

Career Progression and Next Steps

After earning the CT-GenAI certification, professionals can continue progressing within the ISTQB certification pathway by pursuing:

- Core Advanced Level modules

- Expert Level certifications for leadership and process excellence roles

Exam Details

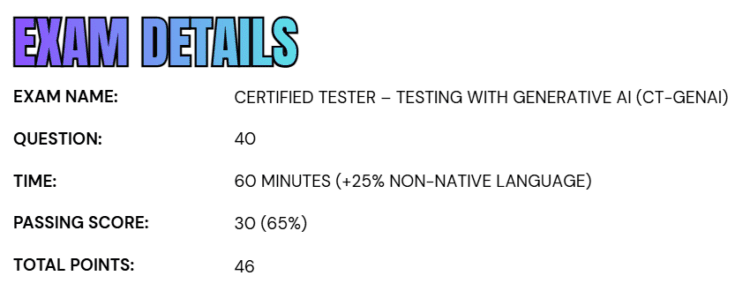

- The Certified Tester – Testing with Generative AI (CT-GenAI) exam consists of 40 questions designed to evaluate a candidate’s understanding of generative AI concepts and their practical application in software testing.

- The exam carries a total of 46 points. Candidates must score at least 30 points, which corresponds to a passing score of about 65%, to achieve certification.

- The total exam duration is 60 minutes. Candidates taking the exam in a non-native language are granted 25% extra time (15 minutes, for a total of 75 minutes), which keeps the exam fair and accessible for international participants.
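The figures above can be checked with a few lines of arithmetic. The following is a minimal sketch that uses only the numbers quoted in this section; the variable names are illustrative and not part of any official ISTQB material.

```python
import math

# Figures quoted in the exam details above.
QUESTIONS = 40
TOTAL_POINTS = 46
PASS_POINTS = 30
BASE_MINUTES = 60
EXTRA_TIME_RATE = 0.25  # allowance for candidates sitting the exam in a non-native language

# Pass mark as a share of the total, and the smallest whole score that reaches 65%.
pass_percentage = PASS_POINTS / TOTAL_POINTS * 100
min_points_for_65 = math.ceil(0.65 * TOTAL_POINTS)

# Duration with the extra-time allowance, and average time available per question.
extended_minutes = BASE_MINUTES * (1 + EXTRA_TIME_RATE)
minutes_per_question = BASE_MINUTES / QUESTIONS
extended_minutes_per_question = extended_minutes / QUESTIONS

print(f"Pass mark: {PASS_POINTS}/{TOTAL_POINTS} = {pass_percentage:.1f}%")
print(f"Smallest whole score at or above 65%: {min_points_for_65}")
print(f"Extended duration: {extended_minutes:.0f} minutes")
print(f"Average time per question: {minutes_per_question:.2f} min "
      f"(standard), {extended_minutes_per_question:.2f} min (extended)")
```

In other words, 30 of 46 points is roughly 65.2%, and the standard duration leaves an average of 90 seconds per question.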

Course Outline

The Certified Tester – Testing with Generative AI (CT-GenAI) exam covers the following topics:

1. Overview of Generative AI for Software Testing – 100 minutes

1.1 Generative AI Foundations and Key Concepts

- (K1) Recalling different types of AI: symbolic AI, classical machine learning, deep learning, and generative AI

- (K2) Explaining the basics of generative AI and large language models

- (H1) Practicing tokenization and token count evaluation when using an LLM for a software test task

- (K2) Distinguishing between foundation, instruction-tuned and reasoning LLMs

- (K2) Summarizing the basic principles of multimodal LLMs and vision-language models

- (H1) Writing and executing a prompt for a multimodal LLM using both textual and image inputs for a software test task

1.2 Leveraging Generative AI in Software Testing: Core Principles

- (K2) Giving examples of key LLM capabilities for test tasks

- (K2) Comparing interaction models when using GenAI for software testing

2. Prompt Engineering for Effective Software Testing – 365 minutes

2.1 Effective Prompt Development

- (K2) Giving examples of the structure of prompts used in generative AI for software testing

- (H0) Observing several given prompts for software test tasks, identifying the components of role, context, instruction, input data, constraints and output format in each

- (K2) Differentiating core prompting techniques for software testing

- (H0) Observing demonstrations of prompt chaining, few-shot prompting, and meta prompting applied to software test tasks

- (H1) Identifying which prompt engineering techniques are being used in given examples

- (K2) Distinguishing between system prompts and user prompts

2.2 Applying Prompt Engineering Techniques to Software Test Tasks

- (K3) Applying generative AI to test analysis tasks

- (H2) Practicing multimodal prompting to generate acceptance criteria for a user story based on a GUI wireframe

- (H2) Practicing prompt chaining and human verification to progressively analyze a given user story and refine acceptance criteria

- (K3) Applying generative AI to test design and test implementation tasks

- (H2) Practicing functional test case generation from user stories with generative AI using prompt chaining, structured prompts and meta-prompting

- (H2) Using the few-shot prompting technique to generate Gherkin-style test conditions and test cases from user stories

- (H2) Using prompt chaining to prioritize test cases within a given test suite, taking into account their specific priorities and dependencies

- (K3) Applying generative AI to automated regression testing

- (H2) Practicing few-shot prompting to create and manage keyword-driven test scripts

- (H2) Practicing structured prompt engineering for test report analysis

- (K3) Applying generative AI to test control and monitoring tasks

- (H0) Observing test monitoring metrics prepared by generative AI from test data

- (K3) Selecting and applying appropriate prompting techniques for a given context and test task

- (H1) Selecting and applying context-appropriate prompting techniques for a given test task

2.3 Evaluate Generative AI Results and Refine Prompts for Software Test Tasks

- (K2) Understanding the metrics for evaluating the results of generative AI on test tasks

- (H0) Observing how metrics can be used for evaluating the result of generative AI on a test task

- (K2) Giving examples of techniques for evaluating and iteratively refining prompts

- (H1) Evaluating and optimizing a prompt for a given test task

3. Managing Risks of Generative AI in Software Testing – 160 minutes

3.1 Hallucinations, Reasoning Errors and Biases

- (K1) Recalling the definitions of hallucinations, reasoning errors and biases in Generative AI systems

- (K3) Identifying hallucinations, reasoning errors and biases in LLM output

- (H1) Experimenting with hallucinations in testing with GenAI

- (H1) Experimenting with reasoning errors in testing with GenAI

- (K2) Summarizing mitigation techniques for GenAI hallucinations, reasoning errors and biases in software test tasks

- (K1) Recalling mitigation techniques for non-deterministic behavior of LLMs

3.2 Data Privacy and Security Risks of Generative AI in Software Testing

- (K2) Explaining key data privacy and security risks associated with using generative AI in software testing

- (K2) Giving examples of data privacy and security vulnerabilities in using Generative AI in software testing

- (K2) Summarizing mitigation strategies to protect data privacy and enhance security in Generative AI for software testing

- (H0) Recognizing data privacy and security risks in a given Generative AI for testing case study

3.3 Energy Consumption and Environmental Impact of Generative AI for Software Testing

- (K2) Explaining the impact of task characteristics and model usage on the energy consumption of Generative AI in software testing

- (H1) Using a simulator to calculate the energy and CO₂ emissions for given test tasks with Generative AI

3.4 AI Regulations, Standards and Best Practice Frameworks

- (K1) Recalling examples of AI regulations, standards and best practice frameworks relevant to Generative AI in software testing

4. LLM-Powered Test Infrastructure for Software Testing – 110 minutes

4.1 Architectural Approaches for LLM-Powered Test Infrastructure

- (K2) Explaining key architectural components and concepts of LLM-powered test infrastructure

- (K2) Summarizing Retrieval-Augmented Generation

- (H1) Experimenting with Retrieval-Augmented Generation for a given test task

- (K2) Explaining the role and application of LLM-powered agents in automating test processes

- (H0) Observing how an LLM-powered agent assists in automating a repetitive test task

4.2 Fine-Tuning and LLMOps: Operationalizing Generative AI for Software Testing

- (K2) Explaining the fine-tuning of language models for specific test tasks

- (H0) Observing an example of a fine-tuning process for a given test task and language model

- (K2) Explaining LLMOps and its role in deploying and managing LLMs for test tasks

5. Deploying and Integrating Generative AI in Test Organizations – 80 minutes

5.1 Roadmap for Adoption of Generative AI in Software Testing

- (K1) Recalling the risks of shadow AI

- (K2) Explaining the key aspects to consider when defining a Generative AI strategy for software testing

- (K2) Summarizing key criteria for selecting LLMs/SLMs for software test tasks in a given context

- (H1) Estimating the recurring costs of using Generative AI for a given test task

- (K1) Recalling key phases in the adoption of Generative AI in a test organization

5.2 Manage Change when Adopting Generative AI for Software Testing

- (K2) Explaining the essential skills and knowledge areas required for testers to work effectively with generative AI in test processes

- (K1) Recalling strategies for cultivating AI skills within test teams to support the adoption of Generative AI in test activities

- (K1) Recognizing how test processes and responsibilities shift within a test organization when adopting Generative AI
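The chapter timings listed in the outline can be added up to see how the teaching time is distributed. The sketch below uses only the minutes shown next to each chapter heading; treating those shares as a guide for splitting study time is an assumption, since the outline does not say how exam questions are distributed across chapters.

```python
# Teaching time per chapter, in minutes, as listed in the course outline.
CHAPTER_MINUTES = {
    "Chapter 1": 100,
    "Chapter 2": 365,
    "Chapter 3": 160,
    "Chapter 4": 110,
    "Chapter 5": 80,
}

total_minutes = sum(CHAPTER_MINUTES.values())
hours, minutes = divmod(total_minutes, 60)

# Share of total teaching time per chapter, as a percentage.
shares = {
    chapter: round(chapter_minutes / total_minutes * 100, 1)
    for chapter, chapter_minutes in CHAPTER_MINUTES.items()
}
largest_chapter = max(CHAPTER_MINUTES, key=CHAPTER_MINUTES.get)

print(f"Total: {total_minutes} minutes ({hours} h {minutes} min)")
for chapter, share in shares.items():
    print(f"{chapter}: {share}%")
print(f"Largest share: {largest_chapter}")
```

The total comes to 815 minutes (13 hours 35 minutes), with the prompt engineering chapter taking the largest share by a wide margin.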

Certified Tester – Testing with Generative AI (CT-GenAI) Exam Study Guide

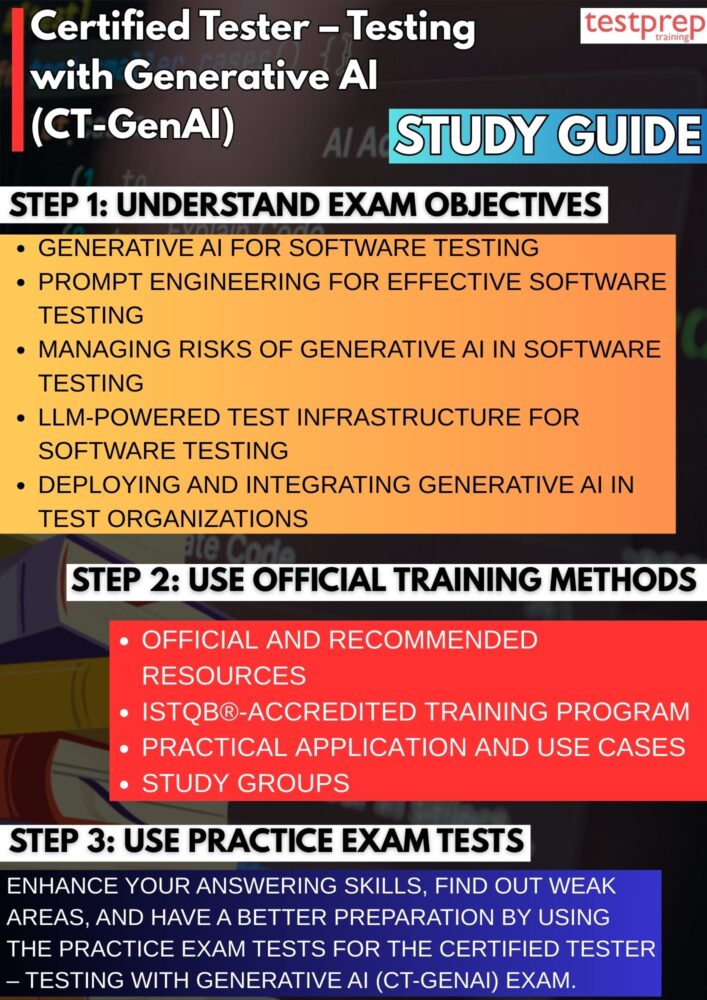

Step 1: Thoroughly Understand the Exam Scope, Structure, and Objectives

Begin by carefully reviewing the official CT-GenAI syllabus to gain a complete understanding of what the exam evaluates. Analyze the defined learning objectives to understand the expected depth of knowledge, including theoretical understanding and applied skills. Pay attention to how generative AI concepts are integrated across the testing lifecycle, such as requirements analysis, test design, execution, automation, reporting, and continuous improvement. Knowing how much time the syllabus gives each topic, and at which learning level (K1 to K3, H0 to H2), helps you focus your preparation on the areas that contribute most to the final score and reduces the risk of overlooking critical topics.

Step 2: Enroll in an ISTQB®-Accredited Training Program

Training delivered by ISTQB-accredited training providers offers structured learning aligned directly with the CT-GenAI syllabus. These programs are available in classroom-based, virtual instructor-led, and self-paced e-learning formats, so you can choose one that fits your schedule and learning preferences. Accredited courses typically include expert instruction, real-world examples, hands-on exercises, and exam-focused explanations that simplify complex generative AI concepts and clarify how they are assessed in the exam.

Step 3: Conduct In-Depth Self-Study Using Official and Recommended Resources

Self-study is essential for mastering CT-GenAI concepts. Use the official syllabus as your primary reference and supplement it with recommended reading materials. Focus on understanding generative AI fundamentals, the working principles of Large Language Models (LLMs), and their practical application in software testing. Pay special attention to responsible AI usage, including ethical considerations, risk identification, and mitigation strategies related to hallucinations, bias, data privacy, security, and environmental impact. Summarizing key points in your own words can significantly improve comprehension and long-term retention.

Step 4: Strengthen Understanding Through Practical Application and Use Cases

Apply theoretical knowledge to practical scenarios to reinforce learning. Practice using generative AI tools to simulate common testing activities such as generating test cases, refining acceptance criteria, supporting test automation, analyzing defects, and improving test documentation. Experimenting with prompt-engineering techniques will help you understand how to guide AI outputs effectively and recognize their limitations. This hands-on approach is particularly valuable for answering application-based and scenario-driven exam questions.
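One way to practice is to assemble prompts from the six components named in the course outline: role, context, instruction, input data, constraints, and output format. The sketch below is a hypothetical helper for that exercise. The class name, the section labels, and the example user story are all assumptions made for illustration, not an official template, and the rendered text can be pasted into whichever LLM tool you use.

```python
from dataclasses import dataclass


@dataclass
class StructuredPrompt:
    """Hypothetical container for the six prompt components from the outline."""
    role: str
    context: str
    instruction: str
    input_data: str
    constraints: str
    output_format: str

    def render(self) -> str:
        """Join the components into one prompt, each under its own label."""
        sections = [
            ("Role", self.role),
            ("Context", self.context),
            ("Instruction", self.instruction),
            ("Input data", self.input_data),
            ("Constraints", self.constraints),
            ("Output format", self.output_format),
        ]
        return "\n\n".join(f"{label}:\n{body.strip()}" for label, body in sections)


# Illustrative content only: the user story below is invented for this example.
prompt = StructuredPrompt(
    role="You are an experienced software tester.",
    context="The team is testing the login page of a web shop.",
    instruction="Derive functional test cases from the user story.",
    input_data="As a registered user, I want to log in with email and password.",
    constraints="Cover valid and invalid credentials. Do not invent requirements.",
    output_format="A numbered list with preconditions, steps, and expected results.",
)

rendered = prompt.render()
print(rendered)
```

Keeping the components separate makes it easy to change one of them, for example the constraints, and compare how the output changes. Any generated test cases still need human review before use.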

Step 5: Collaborate Through Study Groups and Professional Communities

Engaging with study groups and software testing communities can significantly enhance your preparation experience. Peer discussions allow you to share insights, compare interpretations of syllabus topics, and learn from real-world experiences of others working with generative AI in testing. Community participation also helps clarify doubts more quickly, exposes you to alternative perspectives, and keeps you motivated throughout your preparation journey.

Step 6: Regularly Practice with Sample Questions and Mock Exams

Practice tests play a crucial role in assessing exam readiness. Attempt mock exams and sample questions under timed conditions to simulate the real exam environment. Analyze your performance after each attempt, focusing on why certain answers are correct or incorrect. This process helps you identify weak areas, improve accuracy, and develop effective time-management strategies. Consistent practice builds confidence and reduces exam-day anxiety.
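The review step can be made concrete by recording points per chapter after each mock exam and listing the chapters that fall below the pass ratio. This is a minimal sketch: the per-chapter point split and the scores are invented sample data (only the 46-point total and the 30-point pass mark come from the exam details), and `weakest_chapters` is a hypothetical helper name.

```python
PASS_POINTS = 30    # pass mark from the exam details
TOTAL_POINTS = 46   # total points from the exam details


def weakest_chapters(results, threshold=PASS_POINTS / TOTAL_POINTS):
    """Return chapters scoring below the threshold ratio, weakest first.

    `results` maps a chapter label to (points_scored, points_available).
    """
    ratios = {
        chapter: scored / available
        for chapter, (scored, available) in results.items()
    }
    below = [chapter for chapter, ratio in ratios.items() if ratio < threshold]
    return sorted(below, key=ratios.get)


# Invented sample data: the point split per chapter is an assumption.
mock_result = {
    "Chapter 1": (6, 7),
    "Chapter 2": (10, 17),
    "Chapter 3": (8, 10),
    "Chapter 4": (3, 7),
    "Chapter 5": (4, 5),
}

total_scored = sum(scored for scored, _ in mock_result.values())
passed = total_scored >= PASS_POINTS
to_revisit = weakest_chapters(mock_result)

print(f"Score: {total_scored}/{TOTAL_POINTS}, passed: {passed}")
print("Revisit first:", ", ".join(to_revisit))
```

With this sample data the attempt passes overall with 31 points, yet two chapters still fall below the pass ratio, which is the kind of gap a total score alone would hide.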

Step 7: Perform Extensive Revision and Final Readiness Assessment

In the final phase of preparation, revisit all key topics outlined in the syllabus, with special focus on definitions, core concepts, risks, and mitigation approaches. Review your notes, summaries, and practice test analyses to ensure conceptual clarity and consistency. Conduct a final self-assessment to confirm readiness, address any remaining gaps, and reinforce critical areas. Adequate rest and a calm mindset before the exam will further enhance performance.