AWS Certified Generative AI Developer – Professional

The AWS Certified Generative AI Developer – Professional certification is designed for developers who want to demonstrate advanced expertise in building and deploying real-world generative AI applications on AWS. It focuses on moving beyond experimentation into production-ready systems that are scalable, secure, and aligned with business goals.

This certification is especially valuable for professionals with hands-on cloud experience who are ready to take on complex AI-driven workloads. For organizations, it serves as a benchmark to identify developers capable of delivering robust generative AI solutions that create measurable impact while maintaining performance and cost efficiency.

The AWS Certified Generative AI Developer – Professional (AIP-C01) exam evaluates the ability to design, implement, and manage generative AI applications using AWS technologies. It is tailored for individuals working in a GenAI developer role and emphasizes practical, real-world application of concepts over theory alone. Candidates are assessed on their ability to integrate foundation models (FMs) into applications and workflows so that the resulting solutions are production-ready and aligned with modern architectural standards.

Key Skills Validated

This certification confirms a candidate’s ability to handle critical aspects of generative AI development, including:

- Advanced Solution Design

  - Building architectures that incorporate vector databases, retrieval-augmented generation (RAG), and knowledge-based systems

  - Designing scalable and efficient GenAI pipelines

- Application Integration

  - Embedding foundation models into applications and enterprise workflows

  - Connecting AI capabilities with existing systems to enhance business processes

- Prompt Engineering and AI Interaction

  - Crafting and managing prompts for optimal model performance

  - Controlling outputs to ensure consistency and relevance

- Agent-Based AI Systems

  - Developing intelligent agents capable of decision-making and task execution

  - Automating workflows using agentic AI approaches

- Performance and Cost Optimization

  - Balancing computational efficiency with output quality

  - Optimizing resource usage to reduce operational costs

- Security and Responsible AI

  - Implementing secure architectures and access controls

  - Applying governance frameworks and responsible AI practices to ensure ethical use

- Monitoring and Troubleshooting

  - Tracking system performance using observability tools

  - Identifying and resolving issues in AI pipelines

- Model Evaluation

  - Assessing foundation models for accuracy, reliability, and fairness

  - Selecting the most appropriate models for specific use cases

Ideal Candidate Profile

This certification is intended for professionals who:

- Have at least two years of experience developing applications on cloud platforms or with modern frameworks

- Possess a solid understanding of AI/ML concepts or data engineering practices

- Have approximately one year of hands-on experience working with generative AI solutions

Candidates should be comfortable working with production environments and capable of translating business requirements into technical implementations.

Recommended AWS Knowledge

To succeed in the exam, candidates should be familiar with core AWS concepts and services, including:

- Compute, storage, and networking fundamentals within AWS

- Security principles such as identity and access management

- Deployment strategies and infrastructure as code (IaC) tools

- Monitoring, logging, and observability practices

- Cost management and optimization techniques

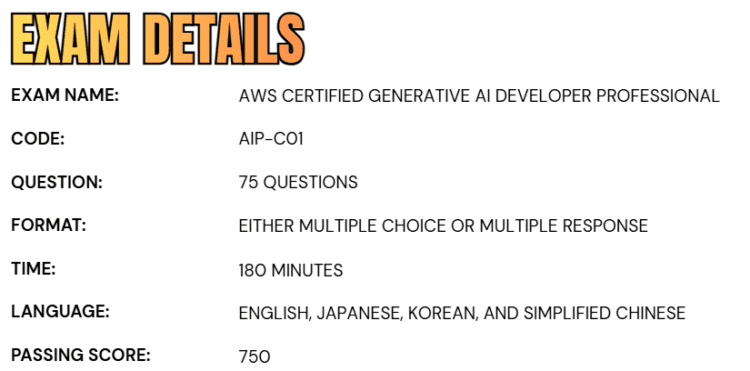

Exam Details

- The AWS Certified Generative AI Developer – Professional (AIP-C01) is a professional-level certification exam designed to assess advanced skills in building and deploying generative AI solutions on AWS. As a professional category exam, it is structured to evaluate both technical depth and practical application in real-world scenarios.

- The exam has a total duration of 180 minutes, giving candidates sufficient time to carefully analyze and respond to each question.

- It consists of 75 questions presented in a combination of multiple-choice and multiple-response formats.

- Candidates can choose to take the exam either at an authorized Pearson VUE testing center or through an online proctored environment, offering flexibility based on individual preference.

- The exam is available in multiple languages, including English, Japanese, Korean, and Simplified Chinese, making it accessible to a global audience.

- The question formats are designed to test different levels of understanding. Multiple-choice questions require selecting one correct answer from four options, while multiple-response questions involve identifying two or more correct answers from a larger set of choices.

- It is important to note that full credit for multiple-response questions is awarded only when all correct options are selected.

- From a scoring perspective, unanswered questions are treated as incorrect, and there is no negative marking for incorrect answers, which encourages candidates to attempt every question.

- Of the 75 questions, 65 are scored and the remaining 10 are unscored; AWS uses the unscored questions to evaluate them for possible future use. To pass the exam, candidates must achieve a scaled score of at least 750 on a scale of 100 to 1,000.
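The scoring rules described above can be illustrated with a small sketch. The function name `score_question` is hypothetical, and treating an extra wrong selection as a loss of credit is an assumption made for illustration; AWS does not publish its scoring code.

```python
def score_question(selected, correct):
    """Return 1 for full credit, 0 otherwise (no partial credit, no penalty)."""
    # An unanswered question (nothing selected) is treated as incorrect.
    if not selected:
        return 0
    # Assumption: credit requires exactly the set of correct options.
    return 1 if set(selected) == set(correct) else 0


# Multiple-choice: one correct answer out of four options.
print(score_question({"B"}, {"B"}))        # 1
# Multiple-response: every correct option must be selected.
print(score_question({"A"}, {"A", "D"}))   # 0
# Unanswered scores the same as a wrong answer, so guessing never hurts.
print(score_question(set(), {"C"}))        # 0
```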

Course Outline

The AWS Certified Generative AI Developer – Professional (AIP-C01) exam covers the following topics:

Domain 1: Understand Foundation Model Integration, Data Management, and Compliance

Task 1.1: Analyze requirements and design GenAI solutions.

- Skill 1.1.1: Create comprehensive architectural designs that align with specific business needs and technical constraints (for example, by using appropriate FMs, integration patterns, deployment strategies).

- Skill 1.1.2: Develop technical proof-of-concept implementations to validate feasibility, performance characteristics, and business value before proceeding to full-scale deployment (for example, by using Amazon Bedrock). (AWS Documentation: Amazon Bedrock)

- Skill 1.1.3: Create standardized technical components to ensure consistent implementation across multiple deployment scenarios (for example, by using the AWS Well-Architected Framework, AWS WA Tool Generative AI Lens). (AWS Documentation: Generative AI Lens – AWS Well-Architected Framework)

Task 1.2: Select and configure FMs.

- Skill 1.2.1: Assess and choose FMs to ensure optimal alignment with specific business use cases and technical requirements (for example, by using performance benchmarks, capability analysis, limitation evaluation).

- Skill 1.2.2: Create flexible architecture patterns to enable dynamic model selection and provider switching without requiring code modifications (for example, by using AWS Lambda, Amazon API Gateway, AWS AppConfig). (AWS Documentation: What is AWS Lambda?, AWS AppConfig, Integrating microservices by using AWS serverless services)

- Skill 1.2.3: Design resilient AI systems to ensure continuous operation during service disruptions (for example, by using AWS Step Functions circuit breaker patterns, Amazon Bedrock Cross-Region Inference for models that have limited regional availability, cross-Region model deployment, graceful degradation strategies). (AWS Documentation: AWS Step Functions – Error Handling and Retry Patterns, Amazon Bedrock Cross-Region Inference, Amazon Bedrock Quotas and Endpoints (Regional Availability), AWS Well-Architected Framework – Reliability Pillar)

- Skill 1.2.4: Implement FM customization deployment and lifecycle management (for example, by using Amazon SageMaker AI to deploy domain-specific fine-tuned models, parameter-efficient adaptation techniques such as low-rank adaptation [LoRA] and adapters for model deployment, SageMaker Model Registry for versioning and to deploy customized models, automated deployment pipelines to update models, rollback strategies for failed deployments, lifecycle management to retire and replace models). (AWS Documentation: Amazon SageMaker Model Deployment, Fine-Tuning Models in Amazon SageMaker, SageMaker Model Registry, SageMaker Pipelines for CI/CD, Deployment Guardrails and Rollback Strategies, Model Monitor and Lifecycle Management)
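As a rough illustration of Skills 1.2.2 and 1.2.3, the sketch below tries models in a configured priority order and falls back when one fails. It is a minimal sketch, not an AWS pattern taken from the exam guide: `invoke_with_fallback`, `MODEL_PRIORITY`, the model names, and `fake_invoke` are all hypothetical, and the real FM call is replaced by an injected function so the example runs without AWS credentials.

```python
from typing import Callable, Sequence


class AllModelsFailed(RuntimeError):
    """Raised when every configured model fails."""


def invoke_with_fallback(
    prompt: str,
    model_ids: Sequence[str],
    invoke: Callable[[str, str], str],
) -> tuple[str, str]:
    """Try each model in priority order; return (model_id, response)."""
    errors = []
    for model_id in model_ids:
        try:
            return model_id, invoke(model_id, prompt)
        except Exception as exc:  # a real client would catch narrower errors
            errors.append(f"{model_id}: {exc}")
    raise AllModelsFailed("; ".join(errors))


# Hypothetical priority list; in practice it could be loaded from a
# configuration service so models can be switched without a code change.
MODEL_PRIORITY = ["primary-model", "fallback-model"]


def fake_invoke(model_id: str, prompt: str) -> str:
    # Stand-in for a real FM call; the primary model is "unavailable" here.
    if model_id == "primary-model":
        raise TimeoutError("simulated outage")
    return f"[{model_id}] answer to: {prompt}"


print(invoke_with_fallback("Summarize the report.", MODEL_PRIORITY, fake_invoke))
```

Keeping the model list outside the function is what allows provider switching through configuration alone; the calling code never names a specific model.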

Task 1.3: Implement data validation and processing pipelines for FM consumption.

- Skill 1.3.1: Create comprehensive data validation workflows to ensure data meets quality standards for FM consumption (for example, by using AWS Glue Data Quality, SageMaker Data Wrangler, custom Lambda functions, Amazon CloudWatch metrics). (AWS Documentation: AWS Glue Data Quality, Amazon SageMaker Data Wrangler, AWS Lambda Developer Guide, Amazon CloudWatch Metrics and Monitoring)

- Skill 1.3.2: Create data processing workflows to handle complex data types including text, image, audio, and tabular data with specialized processing requirements for FM consumption (for example, by using Amazon Bedrock multimodal models, SageMaker Processing, Amazon Transcribe, advanced multimodal pipeline architectures). (AWS Documentation: Amazon SageMaker Processing, Amazon Transcribe Developer Guide)

- Skill 1.3.3: Format input data for FM inference according to model-specific requirements (for example, by using JSON formatting for Amazon Bedrock API requests, structured data preparation for SageMaker AI endpoints, conversation formatting for dialog-based applications). (AWS Documentation: Amazon Bedrock Runtime API Reference, SageMaker Invoke Endpoint (Inference Request Format))

- Skill 1.3.4: Enhance input data quality to improve FM response quality and consistency (for example, by using Amazon Bedrock to reformat text, Amazon Comprehend to extract entities, Lambda functions to normalize data). (AWS Documentation: Amazon Comprehend Developer Guide, AWS Lambda Developer Guide, Amazon Bedrock Runtime API (Text Processing and Transformation))
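To make Skill 1.3.3 concrete, the following sketch formats a dialog as a request shaped like the input to the Amazon Bedrock Converse API (`modelId`, `messages`, `system`, `inferenceConfig`). The helper name `build_converse_request` and the model ID `example-model-id` are placeholders introduced here; the code only builds and prints a dictionary and makes no network call.

```python
import json


def build_converse_request(model_id, turns, system_prompt=None, max_tokens=512):
    """Build a Converse-style request from (role, text) pairs."""
    if not turns or turns[0][0] != "user":
        raise ValueError("conversation must start with a user turn")
    messages = []
    for role, text in turns:
        if role not in ("user", "assistant"):
            raise ValueError(f"unsupported role: {role}")
        # Each message carries a list of content blocks; text is one block type.
        messages.append({"role": role, "content": [{"text": text.strip()}]})
    request = {
        "modelId": model_id,
        "messages": messages,
        "inferenceConfig": {"maxTokens": max_tokens},
    }
    if system_prompt:
        request["system"] = [{"text": system_prompt}]
    return request


request = build_converse_request(
    "example-model-id",  # placeholder, not a real model identifier
    [
        ("user", "What is RAG?"),
        ("assistant", "Retrieval-augmented generation."),
        ("user", "Give one benefit."),
    ],
    system_prompt="Answer in one sentence.",
)
print(json.dumps(request, indent=2))
```

With boto3, a dictionary like this would typically be passed as keyword arguments to the `converse` method of a `bedrock-runtime` client; check the current API reference for the fields a given model accepts.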

Task 1.4: Design and implement vector store solutions.

- Skill 1.4.1: Create advanced vector database architectures specifically for FM augmentation to enable efficient semantic retrieval beyond traditional search capabilities (for example, by using Amazon Bedrock Knowledge Bases for hierarchical organization, Amazon OpenSearch Service with the Neural plugin for Amazon Bedrock integration for topic-based segmentation, Amazon RDS with Amazon S3 document repositories, Amazon DynamoDB with vector databases for metadata and embeddings). (AWS Documentation: Amazon Bedrock Knowledge Bases, Amazon OpenSearch Service – Vector Search and k-NN, Using Amazon RDS with Amazon S3 for Data Storage, Amazon DynamoDB Developer Guide)

- Skill 1.4.2: Develop comprehensive metadata frameworks to improve search precision and context awareness for FM interactions (for example, by using S3 object metadata for document timestamps, custom attributes for authorship information, tagging systems for domain classification). (AWS Documentation: Using Metadata with Amazon S3 Objects, Object Tagging in Amazon S3, Amazon DynamoDB Data Modeling (for Metadata Storage), Amazon OpenSearch Service – Index Mapping and Fields)

- Skill 1.4.3: Implement high-performance vector database architectures to optimize semantic search performance at scale for FM retrieval (for example, by using OpenSearch sharding strategies, multi-index approaches for specialized domains, hierarchical indexing techniques). (AWS Documentation: Amazon OpenSearch Service – Shards and Scaling, Amazon OpenSearch Service – Index Management, Amazon OpenSearch Service – Performance Tuning)

- Skill 1.4.4: Use AWS services to create integration components to connect with resources (for example, document management systems, knowledge bases, internal wikis for comprehensive data integration in GenAI applications). (AWS Documentation: Amazon Bedrock Knowledge Bases, AWS Lambda Developer Guide, Amazon API Gateway Developer Guide, AWS Step Functions Developer Guide, AWS AppFlow (SaaS and Data Source Integration))

- Skill 1.4.5: Design and deploy data maintenance systems to ensure that vector stores contain current and accurate information for FM augmentation (for example, by using incremental update mechanisms, real-time change detection systems, automated synchronization workflows, scheduled refresh pipelines). (AWS Documentation: Amazon OpenSearch Service – Index Refresh and Data Updates, AWS Glue ETL for Incremental Data Processing, AWS Step Functions for Orchestrating Data Pipelines)
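The interplay of embeddings and metadata in Skills 1.4.1 and 1.4.2 can be sketched with a tiny in-memory index. This is a brute-force illustration only; a managed vector store would use approximate nearest-neighbor indexes instead. The document IDs, two-dimensional embeddings, and the `domain` metadata field are made up for the example.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(index, query_vec, top_k=2, domain=None):
    """Filter by metadata first, then rank the remaining documents by similarity."""
    candidates = [
        doc for doc in index
        if domain is None or doc["metadata"]["domain"] == domain
    ]
    ranked = sorted(
        candidates,
        key=lambda doc: cosine(doc["embedding"], query_vec),
        reverse=True,
    )
    return [doc["id"] for doc in ranked[:top_k]]


INDEX = [
    {"id": "doc-1", "embedding": [1.0, 0.0], "metadata": {"domain": "hr"}},
    {"id": "doc-2", "embedding": [0.9, 0.1], "metadata": {"domain": "legal"}},
    {"id": "doc-3", "embedding": [0.0, 1.0], "metadata": {"domain": "hr"}},
]

print(search(INDEX, [1.0, 0.0]))               # ['doc-1', 'doc-2']
print(search(INDEX, [1.0, 0.0], domain="hr"))  # ['doc-1', 'doc-3']
```

The second query shows why metadata matters: the filter removes a semantically close document that belongs to the wrong domain.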

Task 1.5: Design retrieval mechanisms for FM augmentation.

- Skill 1.5.1: Develop effective document segmentation approaches to optimize retrieval performance for FM context augmentation (for example, by using Amazon Bedrock chunking capabilities, Lambda functions to implement fixed-size chunking, custom processing for hierarchical chunking based on content structure). (AWS Documentation: Amazon Bedrock Knowledge Bases – Document Chunking, AWS Lambda Developer Guide, Amazon OpenSearch Service – Indexing and Text Analysis)

- Skill 1.5.2: Select and configure optimal embedding solutions to create efficient vector representations for semantic search (for example, by using Amazon Titan embeddings based on dimensionality and domain fit, by evaluating performance characteristics of Amazon Bedrock embedding models, by using Lambda functions to batch generate embeddings). (AWS Documentation: Model Evaluation in Amazon Bedrock, AWS Lambda Developer Guide)

- Skill 1.5.3: Deploy and configure vector search solutions to enable semantic search capabilities for FM augmentation (for example, by using OpenSearch Service with vector search capabilities, Amazon Aurora with the pgvector extension, Amazon Bedrock Knowledge Bases with managed vector store functionality). (AWS Documentation: Amazon OpenSearch Service – Vector Search, Amazon Aurora PostgreSQL – pgvector Extension, Amazon Bedrock Knowledge Bases)

- Skill 1.5.4: Create advanced search architectures to improve the relevance and accuracy of retrieved information for FM context (for example, by using OpenSearch for semantic search, hybrid search that combines keywords and vectors, Amazon Bedrock reranker models). (AWS Documentation: Amazon OpenSearch Service – Vector and Hybrid Search, Amazon Bedrock Reranking Models)

- Skill 1.5.5: Develop sophisticated query handling systems to improve the retrieval effectiveness and result quality for FM augmentation (for example, by using Amazon Bedrock for query expansion, Lambda functions for query decomposition, Step Functions for query transformation). (AWS Documentation: AWS Lambda Developer Guide, AWS Step Functions Developer Guide)

- Skill 1.5.6: Create consistent access mechanisms to enable seamless integration with FMs (for example, by using function calling interfaces for vector search, Model Context Protocol [MCP] clients for vector queries, standardized API patterns for retrieval augmentation). (AWS Documentation: Amazon Bedrock Agents and Function Calling, Amazon API Gateway Developer Guide, AWS Lambda Developer Guide)
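Fixed-size chunking, mentioned in Skill 1.5.1, might look like the sketch below when implemented by hand (for example, inside a Lambda function). It splits on whitespace and counts words rather than model tokens, which is a simplifying assumption; the function name and default sizes are illustrative.

```python
def chunk_text(text, chunk_size=200, overlap=50):
    """Split text into word-based chunks of `chunk_size` with `overlap` shared words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once the current chunk has reached the end of the text.
        if start + chunk_size >= len(words):
            break
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
print(chunk_text(sample, chunk_size=4, overlap=1))
# ['w0 w1 w2 w3', 'w3 w4 w5 w6', 'w6 w7 w8 w9']
```

The overlap repeats the last word of each chunk at the start of the next, so a sentence that straddles a boundary is still retrievable from at least one chunk.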

Task 1.6: Implement prompt engineering strategies and governance for FM interactions.

- Skill 1.6.1: Create effective model instruction frameworks to control FM behavior and outputs (for example, by using Amazon Bedrock Prompt Management to enforce role definitions, Amazon Bedrock Guardrails to enforce responsible AI guidelines, template configurations to format responses). (AWS Documentation: Amazon Bedrock Prompt Management, Amazon Bedrock Guardrails)

- Skill 1.6.2: Build interactive AI systems to maintain context and improve user interactions with FMs (for example, by using Step Functions for clarification workflows, Amazon Comprehend for intent recognition, DynamoDB for conversation history storage). (AWS Documentation: AWS Step Functions Developer Guide, Amazon Comprehend Developer Guide, Amazon DynamoDB Developer Guide)

- Skill 1.6.3: Implement comprehensive prompt management and governance systems to ensure consistency and oversight of FM operations (for example, by using Amazon Bedrock Prompt Management to create parameterized templates and approval workflows, Amazon S3 to store template repositories, AWS CloudTrail to track usage, Amazon CloudWatch Logs to log access). (AWS Documentation: Amazon Bedrock Prompt Management, AWS CloudTrail User Guide, Amazon CloudWatch Logs)

- Skill 1.6.4: Develop quality assurance systems to ensure prompt effectiveness and reliability for FMs (for example, by using Lambda functions to verify expected output, Step Functions to test edge cases, CloudWatch to test prompt regression). (AWS Documentation: AWS Lambda Developer Guide, AWS Step Functions Developer Guide, Amazon CloudWatch Monitoring and Observability)

- Skill 1.6.5: Enhance FM performance to refine prompts iteratively and improve response quality beyond basic prompting techniques (for example, by using structured input components, output format specifications, chain-of-thought instruction patterns, feedback loops).

- Skill 1.6.6: Design complex prompt systems to handle sophisticated tasks with FMs (for example, by using Amazon Bedrock Prompt Flows for sequential prompt chains, conditional branching based on model responses, reusable prompt components, integrated pre-processing and post-processing steps).
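The idea of parameterized prompt templates (Skill 1.6.3) can be sketched in a few lines. This is not the Amazon Bedrock Prompt Management API; it is a hand-rolled renderer that assumes `{{name}}` placeholders, and the template text and variable names are invented for the example.

```python
import re

# Matches placeholders such as {{role}} or {{ question }}.
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render_prompt(template, variables):
    """Fill every {{name}} placeholder, failing loudly if a value is missing."""
    required = set(PLACEHOLDER.findall(template))
    missing = required - set(variables)
    if missing:
        raise KeyError(f"missing variables: {sorted(missing)}")
    return PLACEHOLDER.sub(lambda m: str(variables[m.group(1)]), template)


TEMPLATE = "You are a {{role}}. Answer in {{format}}: {{question}}"

print(render_prompt(
    TEMPLATE,
    {"role": "support agent", "format": "two sentences", "question": "How do I reset my password?"},
))
# You are a support agent. Answer in two sentences: How do I reset my password?
```

Rejecting a render with missing variables, rather than sending a half-filled prompt, is one simple way to keep template usage consistent across teams.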

Domain 2: Learn about Implementation and Integration

Task 2.1: Implement agentic AI solutions and tool integrations.

- Skill 2.1.1: Develop intelligent autonomous systems with appropriate memory and state management capabilities (for example, by using Strands Agents and AWS Agent Squad for multi-agent systems, MCP for agent-tool interactions). (AWS Documentation: Amazon Bedrock Agents, AWS Step Functions Developer Guide, Amazon DynamoDB Developer Guide)

- Skill 2.1.2: Create advanced problem-solving systems to give FMs the ability to break down and solve complex problems by following structured reasoning steps (for example, by using Step Functions to implement ReAct patterns and chain-of-thought reasoning approaches).

- Skill 2.1.3: Develop safeguarded AI workflows to ensure controlled FM behavior (for example, by using Step Functions to implement stopping conditions, Lambda functions to implement timeout mechanisms, IAM policies to enforce resource boundaries, circuit breakers to mitigate failures). (AWS Documentation: AWS Step Functions – Error Handling and Retry Patterns, AWS Lambda – Function Timeout Configuration, AWS IAM Policies and Permissions)

- Skill 2.1.4: Create sophisticated model coordination systems to optimize performance across multiple capabilities (for example, by using specialized FMs to perform complex tasks, custom aggregation logic for model ensembles, model selection frameworks). (AWS Documentation: Amazon Bedrock – Use Multiple Foundation Models, Amazon Bedrock Agents)

- Skill 2.1.5: Develop collaborative AI systems to enhance FM capabilities with human expertise (for example, by using Step Functions to orchestrate review and approval processes, API Gateway to implement feedback collection mechanisms, human augmentation patterns). (AWS Documentation: AWS Step Functions – Human Approval Workflows, Amazon API Gateway Developer Guide, Human-in-the-Loop Workflows with Amazon Augmented AI (A2I))

- Skill 2.1.6: Implement intelligent tool integrations to extend FM capabilities and to ensure reliable tool operations (for example, by using the Strands API to implement custom behaviors, standardized function definitions, Lambda functions to implement error handling and parameter validation). (AWS Documentation: Amazon Bedrock Agents – Tool Use and Function Calling, AWS Lambda Developer Guide, AWS Step Functions – Service Integrations)

- Skill 2.1.7: Develop model extension frameworks to enhance FM capabilities (for example, by using Lambda functions to implement stateless MCP servers that provide lightweight tool access, Amazon ECS to implement MCP servers that provide complex tools, MCP client libraries to ensure consistent access patterns). (AWS Documentation: Amazon Bedrock Agents – Extend Models with Tools, AWS Lambda Developer Guide, Amazon ECS Developer Guide)
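Stopping conditions and tool error handling (Skills 2.1.3 and 2.1.6) can be illustrated with a bounded decide/act loop. This sketch does not use Strands Agents, MCP, or Step Functions; `run_agent`, the action dictionary shape, and `scripted_policy` (a stand-in for an FM) are assumptions made so the example runs on its own.

```python
def run_agent(decide, tools, goal, max_steps=5):
    """Run a decide/act loop until the policy finishes or the step budget ends."""
    history = []
    for _ in range(max_steps):
        action = decide(goal, history)
        if action["type"] == "finish":
            return action["answer"], history
        tool = tools.get(action["tool"])
        if tool is None:
            observation = f"error: unknown tool {action['tool']!r}"
        else:
            try:
                # Bad or missing arguments surface as an observation, not a crash.
                observation = tool(**action["args"])
            except (TypeError, ValueError) as exc:
                observation = f"error: {exc}"
        history.append((action["tool"], observation))
    return None, history  # stopping condition reached without an answer


def add(a, b):
    return a + b


def scripted_policy(goal, history):
    # Stand-in for an FM: call the tool once, then finish with its result.
    if not history:
        return {"type": "tool", "tool": "add", "args": {"a": 2, "b": 3}}
    return {"type": "finish", "answer": history[-1][1]}


print(run_agent(scripted_policy, {"add": add}, "What is 2 + 3?"))
# (5, [('add', 5)])
```

The `max_steps` budget plays the role of a stopping condition: a policy that never emits a `finish` action still terminates after a fixed number of tool calls.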

Task 2.2: Implement model deployment strategies.

- Skill 2.2.1: Deploy FMs based on specific application needs and performance requirements (for example, by using Lambda functions for on-demand invocation, Amazon Bedrock provisioned throughput configurations, SageMaker AI endpoints to implement hybrid solutions).

- Skill 2.2.2: Deploy FM solutions by addressing unique challenges of large language models (LLMs) that differ from traditional ML deployments (for example, by implementing container-based deployment patterns that are optimized for memory requirements, GPU utilization, and token processing capacity, by following specialized model loading strategies). (AWS Documentation: Deploy Models with Amazon SageMaker Endpoints (GPU & Large Models), Amazon SageMaker Large Model Inference Deep Learning Containers, Amazon ECS GPU Support for Containerized Workloads)

- Skill 2.2.3: Develop optimized FM deployment approaches to balance performance and resource requirements for GenAI workloads (for example, by selecting appropriate models, by using smaller pre-trained models for specific tasks, by using API-based model cascading to perform routine queries).

Task 2.3: Design and implement enterprise integration architectures.

- Skill 2.3.1: Create enterprise connectivity solutions to seamlessly incorporate FM capabilities into existing enterprise environments (for example, by using API-based integrations with legacy systems, event-driven architectures to implement loose coupling, data synchronization patterns). (AWS Documentation: Amazon API Gateway Developer Guide, Amazon EventBridge – Event-Driven Architecture, AWS AppSync (API-based data integration patterns))

- Skill 2.3.2: Develop integrated AI capabilities to enhance existing applications with GenAI functionality (for example, by using API Gateway to implement microservice integrations, Lambda functions for webhook handlers, Amazon EventBridge to implement event-driven integrations).

- Skill 2.3.3: Create secure access frameworks to ensure appropriate security controls (for example, by using identity federation between FM services and enterprise systems, role-based access control for model and data access, least privilege API access to FMs). (AWS Documentation: AWS Identity and Access Management (IAM) – User Guide, IAM Roles and Temporary Credentials, AWS Security Best Practices – Least Privilege Access)

- Skill 2.3.4: Develop cross-environment AI solutions to ensure data compliance across jurisdictions while enabling FM access (for example, by using AWS Outposts for on-premises data integration, AWS Wavelength to perform edge deployments, secure routing between cloud and on-premises resources). (AWS Documentation: AWS Outposts – Hybrid Cloud Deployment, AWS Wavelength – Edge Computing for Low Latency Applications, AWS Direct Connect – Secure Hybrid Connectivity)

- Skill 2.3.5: Implement CI/CD pipelines and GenAI gateway architectures to implement secure and compliant consumption patterns in enterprise environments (for example, by using AWS CodePipeline, AWS CodeBuild, automated testing frameworks for continuous deployment and testing of GenAI components with security scans and rollback support, centralized abstraction layers, observability and control mechanisms). (AWS Documentation: AWS CodePipeline – CI/CD Service, AWS CodeBuild – Build and Test Automation, AWS CodeDeploy – Deployment and Rollback Strategies)

Task 2.4: Implement FM API integrations.

- Skill 2.4.1: Create flexible model interaction systems (for example, by using Amazon Bedrock APIs to manage synchronous requests from various compute environments, language-specific AWS SDKs and Amazon SQS for asynchronous processing, API Gateway to provide custom API clients with request validation). (AWS Documentation: AWS SDKs for Developers, Amazon SQS – Asynchronous Messaging, Amazon API Gateway – Request Validation)

- Skill 2.4.2: Develop real-time AI interaction systems to provide immediate feedback from FMs (for example, by using Amazon Bedrock streaming APIs for incremental response delivery, WebSockets or server-sent events to generate text in real time, API Gateway to implement chunked transfer encoding). (AWS Documentation: Amazon API Gateway – WebSocket APIs)

- Skill 2.4.3: Create resilient FM systems to ensure reliable operations (for example, by using the AWS SDK for exponential backoff, API Gateway to manage rate limiting, fallback mechanisms for graceful degradation, AWS X-Ray to provide observability across service boundaries). (AWS Documentation: AWS SDK Retries and Exponential Backoff, Amazon API Gateway – Throttling and Rate Limiting, AWS X-Ray – Distributed Tracing)

- Skill 2.4.4: Develop intelligent model routing systems to optimize model selection (for example, by using application code to implement static routing configurations, Step Functions for dynamic content-based routing to specialized FMs, intelligent model routing based on metrics, API Gateway with request transformations for routing logic). (AWS Documentation: AWS Step Functions – Choice State (Conditional Routing), Amazon API Gateway – Request and Response Mapping (Transformations))
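Exponential backoff, named in Skill 2.4.3, is simple enough to sketch directly. AWS SDKs ship their own retry behavior, so the code below is only an illustration of the idea: the function name, default delays, and the "full jitter" choice are assumptions, and `flaky_call` simulates a throttled FM request.

```python
import random
import time


def call_with_backoff(fn, max_attempts=4, base_delay=0.5, max_delay=8.0, sleep=time.sleep):
    """Call fn(), retrying failures with exponentially growing, jittered delays."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            # Delay ceiling doubles each attempt: 0.5, 1, 2, ... capped at max_delay.
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            # Full jitter: wait a random time up to the ceiling to spread out retries.
            sleep(random.uniform(0, ceiling))


attempts = {"count": 0}


def flaky_call():
    # Simulated FM request that is throttled twice before succeeding.
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise ConnectionError("simulated throttling")
    return "response"


# sleep is stubbed out here so the example finishes instantly.
print(call_with_backoff(flaky_call, sleep=lambda seconds: None))
print(attempts["count"])
```

Injecting `sleep` keeps the function testable; production code would leave the default so that retries actually wait.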

Task 2.5: Implement application integration patterns and development tools.

- Skill 2.5.1: Create FM API interfaces to address the specific requirements of GenAI workloads (for example, by using API Gateway to handle streaming responses, token limit management, retry strategies to handle model timeouts). (AWS Documentation: Amazon API Gateway – HTTP API and REST API Overview, Amazon API Gateway – Request Throttling and Quotas)

- Skill 2.5.2: Develop accessible AI interfaces to accelerate adoption and integration of FMs (for example, by using AWS Amplify to develop declarative UI components, OpenAPI specifications for API-first development approaches, Amazon Bedrock Prompt Flows for no-code workflow builders). (AWS Documentation: AWS Amplify Documentation, OpenAPI Specification (API-first Development on Amazon API Gateway))

- Skill 2.5.3: Create business system enhancements (for example, by using Lambda functions to implement customer relationship management [CRM] enhancements, Step Functions to orchestrate document processing systems, Amazon Q Business data sources to provide internal knowledge tools, Amazon Bedrock Data Automation to manage automated data processing workflows). (AWS Documentation: AWS Lambda Developer Guide, AWS Step Functions – Workflow Orchestration, Amazon Q Business – Data Sources and Knowledge Integration)

- Skill 2.5.4: Enhance developer productivity to accelerate development workflows for GenAI applications (for example, by using Amazon Q Developer to generate and refactor code, code suggestions for API assistance, AI component testing, performance optimization). (AWS Documentation: Amazon Q Developer – Overview, Amazon Q Developer – Refactoring and Code Transformation)

- Skill 2.5.5: Develop advanced GenAI applications to implement sophisticated AI capabilities (for example, by using Strands Agents and AWS Agent Squad for AWS native orchestration, Step Functions to orchestrate agent design patterns, Amazon Bedrock to manage prompt chaining patterns). (AWS Documentation: Amazon Bedrock Agents, AWS Step Functions Developer Guide)

- Skill 2.5.6: Improve troubleshooting efficiency for FM applications (for example, by using CloudWatch Logs Insights to analyze prompts and responses, X-Ray to trace FM API calls, Amazon Q Developer to implement GenAI-specific error pattern recognition). (AWS Documentation: Amazon CloudWatch Logs Insights, AWS X-Ray – Tracing Applications, Amazon Q Developer – Troubleshooting and Code Assistance)

Domain 3: Understand AI Safety, Security, and Governance

Task 3.1: Implement input and output safety controls.

- Skill 3.1.1: Develop comprehensive content safety systems to protect against harmful user inputs to FMs (for example, by using Amazon Bedrock guardrails to filter content, Step Functions and Lambda functions to implement custom moderation workflows, real-time validation mechanisms). (AWS Documentation: Amazon Bedrock Guardrails, AWS Lambda Developer Guide, AWS Step Functions – Error Handling and Workflow Control)

- Skill 3.1.2: Create content safety frameworks to prevent harmful outputs (for example, by using Amazon Bedrock guardrails to filter responses, specialized FM evaluations for content moderation and toxicity detection, text-to-SQL transformations to ensure deterministic results).

- Skill 3.1.3: Develop accuracy verification systems to reduce hallucinations in FM responses (for example, by using Amazon Bedrock Knowledge Bases to ground responses and perform fact-checking, confidence scoring and semantic similarity search for verification, JSON Schema to enforce structured outputs). (AWS Documentation: Amazon Bedrock Knowledge Bases, Amazon Bedrock Guardrails – Structured Output and Constraints)

- Skill 3.1.4: Create defense-in-depth safety systems to provide comprehensive protection against FM misuse (for example, by using Amazon Comprehend to develop pre-processing filters, Amazon Bedrock to implement model-based guardrails, Lambda functions to perform post-processing validation, API Gateway to implement API response filtering). (AWS Documentation: Amazon Comprehend – Content Analysis and Text Classification, Amazon Bedrock Guardrails, AWS Lambda Developer Guide, Amazon API Gateway – Request and Response Transformations)

- Skill 3.1.5: Implement advanced threat detection to protect against adversarial inputs and security vulnerabilities (for example, by using prompt injection and jailbreak detection mechanisms, input sanitization and content filters, safety classifiers, automated adversarial testing workflows). (AWS Documentation: Amazon Bedrock Guardrails – Safety Controls, AWS WAF – Web Application Firewall (Input Filtering & Protection), Amazon SageMaker Model Monitor – Detect Data and Model Drift)
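Post-processing validation of structured outputs (Skills 3.1.3 and 3.1.4) can be sketched with the standard library alone. A full JSON Schema validator would be the more complete choice; the hand-written check below, with its hypothetical `answer`, `sources`, and `confidence` fields, only illustrates the principle of rejecting malformed FM responses before they reach users.

```python
import json

# Hypothetical output contract: field name -> accepted Python type(s).
REQUIRED_FIELDS = {"answer": str, "sources": list, "confidence": (int, float)}


def validate_output(raw):
    """Return (data, errors); data is None when the response is rejected."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["top-level value must be an object"]
    errors = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in data:
            errors.append(f"missing field: {field}")
        elif not isinstance(data[field], expected):
            errors.append(f"wrong type for {field}")
    # Range check only when the structure itself is sound.
    if not errors and not 0.0 <= data["confidence"] <= 1.0:
        errors.append("confidence out of range")
    return (data if not errors else None), errors


good = '{"answer": "42", "sources": ["doc-1"], "confidence": 0.9}'
bad = '{"answer": "42", "confidence": "high"}'

print(validate_output(good)[1])  # []
print(validate_output(bad)[1])   # ['missing field: sources', 'wrong type for confidence']
```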

Task 3.2: Implement data security and privacy controls.

- Skill 3.2.1: Develop protected AI environments to ensure comprehensive security for FM deployments (for example, by using VPC endpoints to isolate networks, IAM policies to enforce secure data access patterns, AWS Lake Formation to provide granular data access, CloudWatch to monitor data access). (AWS Documentation: Amazon VPC – VPC Endpoints (PrivateLink), AWS Identity and Access Management (IAM) – Access Policies, AWS Lake Formation – Data Lake Security and Permissions)

- Skill 3.2.2: Develop privacy-preserving systems to protect sensitive information during FM interactions (for example, by using Amazon Comprehend and Amazon Macie to detect personally identifiable information [PII], Amazon Bedrock native data privacy features, Amazon Bedrock guardrails to filter outputs, Amazon S3 Lifecycle configurations to implement data retention policies). (AWS Documentation: Amazon Comprehend – PII Detection, Amazon Macie – Data Security and PII Discovery, Amazon Bedrock Guardrails, Amazon S3 Lifecycle Management (Data Retention Policies))

- Skill 3.2.3: Create privacy-focused AI systems to protect user privacy while maintaining FM utility and effectiveness (for example, by using data masking techniques, Amazon Comprehend PII detection, anonymization strategies for sensitive information, Amazon Bedrock guardrails). (AWS Documentation: Amazon Comprehend – PII Detection, Amazon Bedrock Guardrails, AWS Prescriptive Guidance – Data Anonymization Techniques)
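Data masking before an FM call (Skill 3.2.3) is shown below in its simplest form. The regular expressions are a deliberately naive stand-in for a managed PII detector such as Amazon Comprehend: they cover only email addresses and one US-style phone format, and the labels `EMAIL` and `PHONE` are chosen for this example.

```python
import re

# Naive, illustrative patterns; real PII detection needs far broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def mask_pii(text):
    """Replace each detected span with a bracketed label such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


print(mask_pii("Contact jane.doe@example.com or 555-123-4567."))
# Contact [EMAIL] or [PHONE].
```

Masking with a typed label instead of deleting the span keeps the sentence readable for the model while removing the sensitive value itself.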

Task 3.3: Implement AI governance and compliance mechanisms.

- Skill 3.3.1: Develop compliance frameworks to ensure regulatory compliance for FM deployments (for example, by using SageMaker AI to develop programmatic model cards, AWS Glue to automatically track data lineage, metadata tagging for systematic data source attribution, CloudWatch Logs to collect comprehensive decision logs). (AWS Documentation: Amazon SageMaker Model Cards, AWS Glue – Data Lineage Tracking, Amazon CloudWatch Logs)

- Skill 3.3.2: Implement data source tracking to maintain traceability in GenAI applications (for example, by using AWS Glue Data Catalog to register data sources, metadata tagging for source attribution in FM-generated content, CloudTrail for audit logging). (AWS Documentation: AWS Glue Data Catalog, Amazon S3 Object Tagging and Metadata, AWS CloudTrail – Event Logging and Audit Trails)

- Skill 3.3.3: Create organizational governance systems to ensure consistent oversight of FM implementations (for example, by using comprehensive frameworks that align with organizational policies, regulatory requirements, and responsible AI principles). (AWS Documentation: AWS Well-Architected Framework – Governance Best Practices, Amazon Bedrock Guardrails – Responsible AI Controls, AWS Organizations – Policy-Based Management)

- Skill 3.3.4: Implement continuous monitoring and advanced governance controls to support safety audits and regulatory readiness (for example, by using automated detection for misuse, drift, and policy violations, bias drift monitoring, automated alerting and remediation workflows, token-level redaction, response logging, AI output policy filters). (AWS Documentation: Amazon Bedrock Guardrails – Monitoring and Safety Controls, Amazon SageMaker Model Monitor – Drift Detection, Amazon CloudWatch – Monitoring and Alarms)
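
Source attribution and decision logging (Skills 3.3.1 and 3.3.2) often come down to emitting one structured record per FM invocation. The sketch below shows one possible shape for such a record. The field names and the `build_decision_record` helper are assumptions made for illustration, not an AWS-defined schema. In practice the JSON line would be shipped to a log destination such as CloudWatch Logs.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_decision_record(model_id, prompt, response, sources, timestamp=None):
    """Build a structured audit record for one FM invocation.

    The schema is hypothetical. The prompt is stored as a SHA-256 digest
    so the log can prove which prompt was used without holding raw text.
    """
    ts = timestamp or datetime.now(timezone.utc)
    return {
        "timestamp": ts.isoformat(),
        "model_id": model_id,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "response_chars": len(response),
        "sources": [
            {"uri": s["uri"], "owner": s.get("owner", "unknown")}
            for s in sources
        ],
    }


if __name__ == "__main__":
    record = build_decision_record(
        model_id="example-model",  # placeholder identifier
        prompt="Summarize the refund policy.",
        response="Refunds are issued within 30 days.",
        sources=[{"uri": "s3://example-bucket/policies/refunds.pdf", "owner": "legal"}],
    )
    print(json.dumps(record))
```

Hashing the prompt is a design choice for this sketch. Teams that need full forensic replay may log the raw text instead, under stricter access controls.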

Task 3.4: Implement responsible AI principles.

- Skill 3.4.1: Develop transparent AI systems in FM outputs (for example, by using reasoning displays to provide user-facing explanations, CloudWatch to collect confidence metrics and quantify uncertainty, evidence presentation for source attribution, Amazon Bedrock agent tracing to provide reasoning traces).

- Skill 3.4.2: Apply fairness evaluations to ensure unbiased FM outputs (for example, by using pre-defined fairness metrics in CloudWatch, Amazon Bedrock Prompt Management and Amazon Bedrock Prompt Flows to perform systematic A/B testing, Amazon Bedrock with LLM-as-a-judge solutions to perform automated model evaluations). (AWS Documentation: Amazon CloudWatch Metrics and Alarms)

- Skill 3.4.3: Develop policy-compliant AI systems to ensure adherence to responsible AI practices (for example, by using Amazon Bedrock guardrails based on policy requirements, model cards to document FM limitations, Lambda functions to perform automated compliance checks). (AWS Documentation: Amazon Bedrock Guardrails, Amazon SageMaker Model Cards, AWS Lambda Developer Guide)
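
Skill 3.4.3 mentions Lambda functions for automated compliance checks. One way to picture this is a handler that inspects a model output against a small rule set. The policy contents, the `model_output` event field, and the response shape below are all hypothetical. Real rules would come from the organization's responsible AI requirements.

```python
import json

# Hypothetical policy used only for this sketch.
POLICY = {
    "blocked_terms": ["guaranteed returns", "medical diagnosis"],
    "required_disclaimer": "This response was generated by AI.",
    "max_chars": 2000,
}


def check_compliance(text, policy=POLICY):
    """Return a list of policy violations found in the text."""
    violations = []
    lowered = text.lower()
    for term in policy["blocked_terms"]:
        if term in lowered:
            violations.append(f"blocked_term:{term}")
    if policy["required_disclaimer"] not in text:
        violations.append("missing_disclaimer")
    if len(text) > policy["max_chars"]:
        violations.append("too_long")
    return violations


def lambda_handler(event, context):
    """Lambda-style entry point; expects the FM output under 'model_output'."""
    violations = check_compliance(event.get("model_output", ""))
    body = {"compliant": not violations, "violations": violations}
    return {"statusCode": 200, "body": json.dumps(body)}


if __name__ == "__main__":
    print(lambda_handler({"model_output": "We offer guaranteed returns."}, None))
```

A check like this complements guardrails rather than replacing them: guardrails filter at inference time, while a function of this kind can run after the fact over logged outputs.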

Domain 4: Learn about Operational Efficiency and Optimization for GenAI Applications

Task 4.1: Implement cost optimization and resource efficiency strategies.

- Skill 4.1.1: Develop token efficiency systems to reduce FM costs while maintaining effectiveness (for example, by using token estimation and tracking, context window optimization, response size controls, prompt compression, context pruning, response limiting). (AWS Documentation: Amazon Bedrock Model Inference Controls (Response Length and Parameters), Amazon CloudWatch – Monitoring Usage and Metrics)

- Skill 4.1.2: Create cost-effective model selection frameworks (for example, by using cost-capability tradeoff evaluation, tiered FM usage based on query complexity, inference cost balancing against response quality, price-to-performance ratio measurement, efficient inference patterns). (AWS Documentation: Amazon Bedrock Pricing (Cost and Performance Considerations), Amazon SageMaker Inference Optimization Toolkit)

- Skill 4.1.3: Develop high-performance FM systems to maximize resource utilization and throughput for GenAI workloads (for example, by using batching strategies, capacity planning, utilization monitoring, auto-scaling configurations, provisioned throughput optimization).

- Skill 4.1.4: Create intelligent caching systems to reduce costs and improve response times by avoiding unnecessary FM invocations (for example, by using semantic caching, result fingerprinting, edge caching, deterministic request hashing, prompt caching). (AWS Documentation: Amazon Bedrock Prompt Caching (Reduce Repeated Inference Cost), Amazon ElastiCache – Semantic Caching for LLM Applications, Optimizing LLM Cost and Latency with Caching Strategies)
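
Deterministic request hashing from Skill 4.1.4 can be illustrated with a small in-memory cache keyed on a normalized request. This is a sketch under stated assumptions: `CachedInvoker` and `request_key` are hypothetical names, the `invoke_fn` callable stands in for whatever client actually calls the FM, and the approach only makes sense when identical requests should produce identical answers (for example, with temperature set to 0).

```python
import hashlib
import json


def request_key(model_id, prompt, params):
    """Derive a stable cache key from the model, prompt, and parameters."""
    normalized = {
        "model_id": model_id,
        "prompt": " ".join(prompt.split()),  # collapse whitespace differences
        "params": params,
    }
    # sort_keys makes the key independent of dict insertion order.
    payload = json.dumps(normalized, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class CachedInvoker:
    """Wrap an FM invocation function with an exact-match cache."""

    def __init__(self, invoke_fn):
        self._invoke_fn = invoke_fn
        self._cache = {}
        self.hits = 0
        self.misses = 0

    def invoke(self, model_id, prompt, params):
        key = request_key(model_id, prompt, params)
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        result = self._invoke_fn(model_id, prompt, params)
        self._cache[key] = result
        return result


if __name__ == "__main__":
    invoker = CachedInvoker(lambda m, p, params: f"echo: {p}")
    invoker.invoke("example-model", "What is  RAG?", {"temperature": 0})
    invoker.invoke("example-model", "What is RAG?", {"temperature": 0})
    print(invoker.hits, invoker.misses)
```

Exact-match hashing only catches repeated requests. Semantic caching, also listed in this skill, would instead compare embeddings so that paraphrased questions can share a cached answer.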

Task 4.2: Optimize application performance.

- Skill 4.2.1: Create responsive AI systems to address latency-cost tradeoffs and improve the user experience with FMs (for example, by using pre-computation for predictable queries, latency-optimized Amazon Bedrock models for time-sensitive applications, parallel requests for complex workflows, response streaming, performance benchmarking). (AWS Documentation: AWS Well-Architected Framework – Performance Efficiency Pillar)

- Skill 4.2.2: Enhance retrieval performance to improve the relevance and speed of retrieved information for FM context augmentation (for example, by using index optimization, query preprocessing, hybrid search implementation with custom scoring). (AWS Documentation: Amazon OpenSearch Service – Vector Search (k-NN), Amazon OpenSearch Service – Indexing and Performance Tuning, Amazon Bedrock Knowledge Bases – Retrieval and RAG Optimization)

- Skill 4.2.3: Implement FM throughput optimization to address the specific throughput challenges of GenAI workloads (for example, by using token processing optimization, batch inference strategies, concurrent model invocation management). (AWS Documentation: Amazon SageMaker Batch Transform (Batch Inference), Amazon CloudWatch – Metrics for Scaling and Throughput Monitoring)

- Skill 4.2.4: Enhance FM performance to achieve optimal results for specific GenAI use cases (for example, by using model-specific parameter configurations, A/B testing to evaluate improvements, appropriate temperature and top-k/top-p selection based on requirements).

- Skill 4.2.5: Create efficient resource allocation systems specifically for FM workloads (for example, by using capacity planning for token processing requirements, utilization monitoring for prompt and completion patterns, auto-scaling configurations that are optimized for GenAI traffic patterns). (AWS Documentation: Amazon SageMaker Inference Auto Scaling (Dynamic Resource Allocation), AWS Well-Architected Framework – Performance Efficiency Pillar (Workload Optimization & Monitoring))

- Skill 4.2.6: Optimize FM system performance for GenAI workflows (for example, by using API call profiling for prompt-completion patterns, vector database query optimization for retrieval augmentation, latency reduction techniques specific to LLM inference, efficient service communication patterns). (AWS Documentation: Amazon OpenSearch Service – Vector Search Performance Tuning, AWS X-Ray – Application Performance Profiling and Tracing)
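
The hybrid search with custom scoring mentioned in Skill 4.2.2 blends a lexical signal with a vector-similarity signal. The sketch below shows the idea in plain Python. The `hybrid_rank` function, the term-overlap keyword score, and the `alpha` weight are illustrative assumptions. A managed vector store such as Amazon OpenSearch Service would compute both signals natively.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def keyword_score(query, text):
    """Fraction of query terms that appear in the document text."""
    q_terms = set(query.lower().split())
    d_terms = set(text.lower().split())
    return len(q_terms & d_terms) / len(q_terms) if q_terms else 0.0


def hybrid_rank(query, query_vec, docs, alpha=0.5):
    """Rank documents by alpha * vector score + (1 - alpha) * keyword score."""
    scored = []
    for doc in docs:
        score = (alpha * cosine(query_vec, doc["vector"])
                 + (1 - alpha) * keyword_score(query, doc["text"]))
        scored.append((score, doc["id"]))
    return [doc_id for _, doc_id in sorted(scored, reverse=True)]


if __name__ == "__main__":
    docs = [
        {"id": "a", "text": "reset password steps", "vector": [1.0, 0.0]},
        {"id": "b", "text": "billing invoice", "vector": [0.0, 1.0]},
    ]
    print(hybrid_rank("reset password", [1.0, 0.0], docs))
```

Tuning `alpha` is the custom-scoring lever: values near 1 favor semantic matches, while values near 0 favor exact term matches such as product codes.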

Task 4.3: Implement monitoring systems for GenAI applications.

- Skill 4.3.1: Create holistic observability systems to provide complete visibility into FM application performance (for example, by using operational metrics, performance tracing, FM interaction tracing, business impact metrics with custom dashboards). (AWS Documentation: Amazon CloudWatch – Dashboards and Metrics, AWS X-Ray – Distributed Tracing, Amazon Bedrock Observability and Logging)

- Skill 4.3.2: Implement comprehensive GenAI monitoring systems to proactively identify issues and evaluate key performance indicators specific to FM implementations (for example, by using CloudWatch to track token usage, prompt effectiveness, hallucination rates, and response quality; anomaly detection for token burst patterns and response drift; Amazon Bedrock Model Invocation Logs to perform detailed request and response analysis; performance benchmarks; cost anomaly detection). (AWS Documentation: Amazon CloudWatch – Metrics and Logs, Amazon Bedrock – Model Invocation Logging and Monitoring, AWS Cost Anomaly Detection)

- Skill 4.3.3: Develop integrated observability solutions to provide actionable insights for FM applications (for example, by using operational metric dashboards, business impact visualizations, compliance monitoring, forensic traceability and audit logging, user interaction tracking, model behavior pattern tracking). (AWS Documentation: Amazon CloudWatch – Dashboards and Metrics, AWS X-Ray – Distributed Tracing and Service Maps, AWS CloudTrail – Audit Logging and Traceability)

- Skill 4.3.4: Create tool performance frameworks to ensure optimal tool operation and utilization for FMs (for example, by using call pattern tracking, performance metric collection, tool calling observability and multi-agent coordination tracking, usage baselines for anomaly detection).

- Skill 4.3.5: Create vector store operational management systems to ensure optimal vector store operation and reliability for FM augmentation (for example, by using performance monitoring for vector databases, automated index optimization routines, data quality validation processes). (AWS Documentation: Amazon OpenSearch Service – Monitoring and Performance Tuning, Amazon OpenSearch Service – Index Management and Optimization, AWS Glue Data Quality – Data Validation Frameworks)

- Skill 4.3.6: Develop FM-specific troubleshooting frameworks to identify unique GenAI failure modes that are not present in traditional ML systems (for example, by using golden datasets to detect hallucinations, output diffing techniques to conduct response consistency analysis, reasoning path tracing to identify logical errors, specialized observability pipelines).
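
Anomaly detection for token burst patterns (Skill 4.3.2) and usage baselines (Skill 4.3.4) can be approximated with a simple z-score check against recent history. The following is a rough sketch: the `is_token_burst` helper and the threshold of 3 standard deviations are assumptions, and a managed option such as CloudWatch anomaly detection would normally replace hand-rolled statistics.

```python
from statistics import mean, stdev


def is_token_burst(history, current, threshold=3.0):
    """Flag the current token count if it sits far above the baseline.

    history: recent per-interval token counts used as the baseline.
    current: token count for the interval being evaluated.
    """
    if len(history) < 2:
        return False  # not enough data to form a baseline
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return current > baseline
    return (current - baseline) / spread > threshold


if __name__ == "__main__":
    recent = [100, 110, 90, 105, 95]
    print(is_token_burst(recent, 500))
    print(is_token_burst(recent, 102))
```

Only upward deviations are flagged here because the concern is runaway cost. A drift monitor would typically look at deviations in both directions.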

Domain 5: Process of Testing, Validation, and Troubleshooting

Task 5.1: Implement evaluation systems for GenAI.

- Skill 5.1.1: Develop comprehensive assessment frameworks to evaluate the quality and effectiveness of FM outputs beyond traditional ML evaluation approaches (for example, by using metrics for relevance, factual accuracy, consistency, and fluency). (AWS Documentation: Amazon Bedrock Evaluations – LLM-as-a-Judge Framework for Accuracy & Quality Assessment, AWS Prescriptive Guidance – Evaluating Quality and Reliability of Generative AI Outputs)

- Skill 5.1.2: Create systematic model evaluation systems to identify optimal configurations (for example, by using Amazon Bedrock Model Evaluations, A/B testing and canary testing of FMs, multi-model evaluation, cost-performance analysis to measure token efficiency, latency-to-quality ratios, and business outcomes).

- Skill 5.1.3: Develop user-centered evaluation mechanisms to continuously improve FM performance based on user experience (for example, by using feedback interfaces, rating systems for model outputs, annotation workflows to assess response quality). (AWS Documentation: Amazon Augmented AI (A2I) – Human Review Workflows, AWS Amplify – Building Feedback-Driven Web Applications)

- Skill 5.1.4: Create systematic quality assurance processes to maintain consistent performance standards for FMs (for example, by using continuous evaluation workflows, regression testing for model outputs, automated quality gates for deployments).

- Skill 5.1.5: Develop comprehensive assessment systems to ensure thorough evaluation from multiple perspectives for FM outputs (for example, by using RAG evaluation, automated quality assessment with LLM-as-a-Judge techniques, human feedback collection interfaces).

- Skill 5.1.6: Implement retrieval quality testing to evaluate and optimize information retrieval components for FM augmentation (for example, by using relevance scoring, context matching verification, retrieval latency measurements).

- Skill 5.1.7: Develop agent performance frameworks to ensure that agents perform tasks correctly and efficiently (for example, by using task completion rate measurements, tool usage effectiveness evaluations, Amazon Bedrock Agent evaluations, reasoning quality assessment in multi-step workflows).

- Skill 5.1.8: Create comprehensive reporting systems to communicate performance metrics and insights effectively to stakeholders for FM implementations (for example, by using visualization tools, automated reporting mechanisms, model comparison visualizations).

- Skill 5.1.9: Create deployment validation systems to maintain reliability during FM updates (for example, by using synthetic user workflows, AI-specific output validation for hallucination rates and semantic drift, automated quality checks to ensure response consistency).
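
Retrieval quality testing (Skill 5.1.6) usually starts from a labeled set of queries with known relevant documents. Two common relevance metrics, recall at k and reciprocal rank, are sketched below. The document IDs are made up for the example, and the function names are not taken from any AWS tool.

```python
def recall_at_k(retrieved, relevant, k):
    """Share of relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    top_k = retrieved[:k]
    return len(set(top_k) & set(relevant)) / len(relevant)


def reciprocal_rank(retrieved, relevant):
    """1 / rank of the first relevant result, or 0.0 if none is retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


if __name__ == "__main__":
    retrieved = ["d3", "d1", "d7"]   # ranked output of the retriever
    relevant = {"d1", "d2"}          # ground-truth labels for the query
    print(recall_at_k(retrieved, relevant, k=3))
    print(reciprocal_rank(retrieved, relevant))
```

Averaging these values over a golden query set gives a baseline that can be rechecked after every change to chunking, embeddings, or index settings.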

Task 5.2: Troubleshoot GenAI applications.

- Skill 5.2.1: Resolve content handling issues to ensure that necessary information is processed completely in FM interactions (for example, by using context window overflow diagnostics, dynamic chunking strategies, prompt design optimization, truncation-related error analysis).

- Skill 5.2.2: Diagnose and resolve FM integration issues to identify and fix API integration problems specific to GenAI services (for example, by using error logging, request validation, response analysis).

- Skill 5.2.3: Troubleshoot prompt engineering problems to improve FM response quality and consistency beyond basic prompt adjustments (for example, by using prompt testing frameworks, version comparison, systematic refinement).

- Skill 5.2.4: Troubleshoot retrieval system issues to identify and resolve problems that affect information retrieval effectiveness for FM augmentation (for example, by using model response relevance analysis, embedding quality diagnostics, drift monitoring, vectorization issue resolution, chunking and preprocessing remediation, vector search performance optimization).

- Skill 5.2.5: Troubleshoot prompt maintenance issues to continuously improve the performance of FM interactions (for example, by using template testing and CloudWatch Logs to diagnose prompt confusion, X-Ray to implement prompt observability pipelines, schema validation to detect format inconsistencies, systematic prompt refinement workflows).
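
Context window overflow and dynamic chunking (Skill 5.2.1) can be illustrated by packing paragraphs into chunks that stay under a token budget. This is only a sketch: `estimate_tokens` counts whitespace-separated words as a crude proxy, whereas real tokenizers vary by model, and both helper names are hypothetical.

```python
def estimate_tokens(text):
    """Crude token estimate: one token per whitespace-separated word."""
    return len(text.split())


def chunk_by_budget(paragraphs, max_tokens):
    """Greedily pack paragraphs into chunks of at most max_tokens each."""
    chunks, current, used = [], [], 0
    for paragraph in paragraphs:
        size = estimate_tokens(paragraph)
        if size > max_tokens:
            # Surface the overflow instead of silently truncating content.
            raise ValueError(f"paragraph of {size} tokens exceeds budget {max_tokens}")
        if current and used + size > max_tokens:
            chunks.append("\n\n".join(current))
            current, used = [], 0
        current.append(paragraph)
        used += size
    if current:
        chunks.append("\n\n".join(current))
    return chunks


if __name__ == "__main__":
    parts = ["a b c", "d e", "f g h i"]
    for chunk in chunk_by_budget(parts, max_tokens=5):
        print(repr(chunk))
```

Raising an error on an oversized paragraph is a deliberate choice in this sketch. It turns a silent truncation problem into a visible diagnostic, which is the kind of signal truncation-related error analysis depends on.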

AWS Certified Generative AI Developer Professional Exam FAQs

AWS Certification Exam Policy

Amazon Web Services (AWS) maintains a well-defined set of policies to ensure that its certification program remains fair, secure, and globally consistent. These guidelines govern everything from exam attempts and scoring methodologies to certification validity. Understanding these policies in advance allows candidates to approach the certification process with clarity and better planning.

– Retake and Eligibility Guidelines

If a candidate does not achieve a passing score, AWS requires a waiting period of 14 calendar days before the same exam can be attempted again. While there is no fixed limit on the number of retries, each attempt requires payment of the full exam fee. Once a candidate passes an exam, they are not permitted to retake that specific version for the next two years. However, if AWS introduces a new version of the exam with updated objectives and a different exam code, candidates are eligible to attempt the revised version.

– Scoring and Results

The AWS Certified Generative AI Developer – Professional (AIP-C01) exam follows a pass-or-fail evaluation model, based on standards set by AWS experts in alignment with industry best practices. Results are reported using a scaled scoring system ranging from 100 to 1,000, with 750 as the minimum passing mark. This scaled approach ensures fairness by normalizing scores across different versions of the exam that may vary slightly in difficulty.

– Performance Insights

In addition to the overall result, candidates may receive a breakdown of their performance across different exam domains. AWS uses a compensatory scoring model, meaning success is determined by the overall score rather than individual section performance. This allows candidates to offset weaker areas with stronger performance in others.

Each domain within the exam carries a different weight, which affects its contribution to the final score. The section-level feedback is intended to provide a general indication of strengths and areas for improvement, but it should be interpreted as directional guidance rather than an exact measure of proficiency.

AWS Certified Generative AI Developer Professional Exam Study Guide

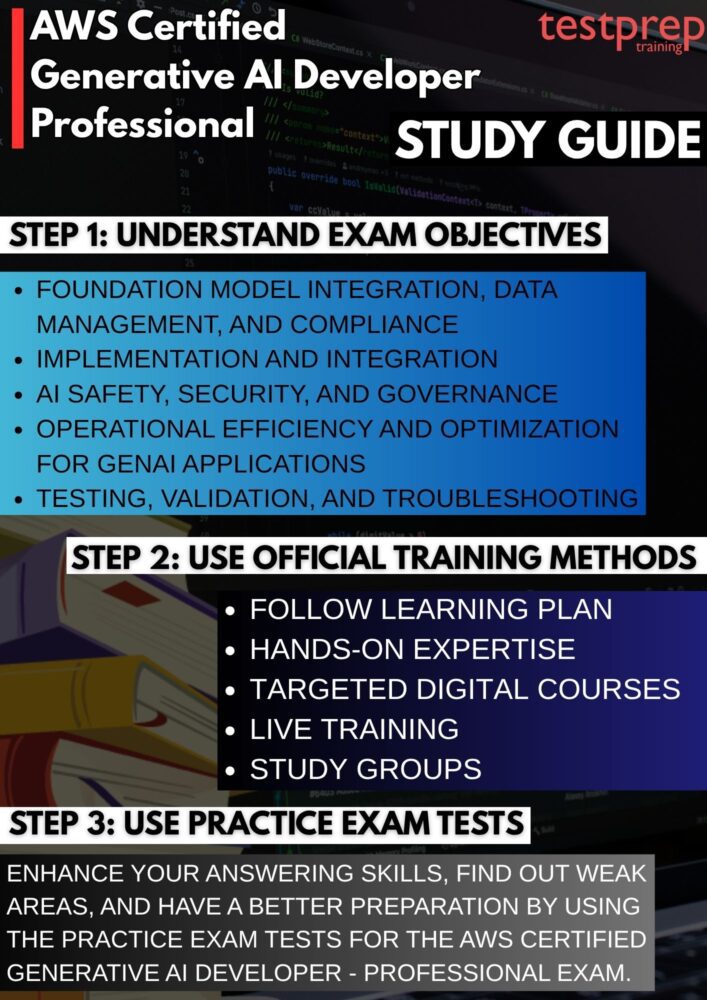

1. Master the Official Exam Guide and Domain Objectives

Your preparation should begin with a deep dive into the official exam guide, as it defines the exact scope of the certification. Go beyond simply reading the topics: analyze how each domain connects to real-world generative AI workflows. Pay close attention to areas such as foundation model integration, Retrieval-Augmented Generation (RAG), prompt engineering, agentic AI systems, and security practices. Break down each objective into subtopics and map them to practical implementations. This approach ensures you are not just aware of concepts but can apply them in production scenarios, which is critical for a professional-level exam.

2. Follow a Structured, Phased Learning Plan

Adopting a structured preparation framework helps you stay consistent and organized. A recommended approach is to divide your preparation into four phases: understanding the exam requirements, building foundational knowledge, gaining hands-on experience, and validating your readiness. In the initial phase, focus on clarity of concepts. In the second phase, deepen your understanding through documentation and guided learning. The third phase should emphasize real-world implementation, while the final phase should focus on revision and testing. This layered strategy ensures progressive learning without gaps.

3. Build Hands-On Expertise with AWS Learning Platforms

Practical experience is essential for this certification, as many exam questions are scenario-based. Use platforms like AWS Builder Labs, AWS Cloud Quest, and AWS Jam to simulate real-world environments. These tools allow you to work on tasks such as deploying AI models, integrating APIs, managing data pipelines, and optimizing performance. Hands-on practice helps you understand service interactions, architectural decisions, and troubleshooting techniques, which are often tested in the exam. The more scenarios you explore, the more confident you become in handling complex problem statements.

4. Strengthen Knowledge with Targeted Digital Courses

Identify gaps in your understanding and enroll in focused digital courses to address them. Instead of passively consuming content, actively engage with the course material by taking notes, revisiting challenging concepts, and implementing what you learn. Prioritize topics like prompt engineering strategies, vector databases, cost optimization techniques, and monitoring solutions. A targeted learning approach ensures efficient use of time and helps you build expertise in the most heavily weighted domains.

5. Demonstrate Real Skills with Microcredentials

To stand out as a GenAI professional, it is important to validate your practical abilities. AWS microcredentials, particularly those focused on agentic AI and generative AI implementations, provide an opportunity to showcase your hands-on expertise. These credentials demonstrate that you can design, build, and deploy AI-driven solutions rather than just understand them theoretically. They also reinforce your preparation by exposing you to real implementation challenges aligned with industry expectations.

6. Leverage Live Training and Expert-Led Sessions

Participating in live training sessions and expert discussions can significantly enhance your preparation. These sessions often cover advanced topics, architectural patterns, and best practices that are directly relevant to the exam. They also provide insights into how AWS services are used in real production environments. Interactive formats allow you to clarify doubts instantly and gain practical tips that are not always available in documentation or recorded courses.

7. Engage with Study Groups and Professional Communities

Joining study groups or online communities can add a collaborative dimension to your preparation. Engaging with peers helps you explore different approaches to solving problems, discuss challenging scenarios, and stay motivated throughout your journey. Community discussions often highlight common pitfalls, exam strategies, and emerging trends in generative AI. Learning from others’ experiences can significantly improve your understanding and confidence.

8. Practice Extensively with Mock Exams and Performance Analysis

Practice tests are a critical component of your preparation. Attempt full-length mock exams under timed conditions to simulate the actual exam environment. Focus not only on accuracy but also on time management and decision-making. After each test, perform a detailed analysis of your performance. Identify weak areas, revisit concepts, and refine your approach to scenario-based questions. Consistent practice combined with thorough review ensures steady improvement and readiness for the final exam.