Microsoft Certified: Azure Databricks Data Engineer Associate (DP-750)

The DP-750: Implementing Data Engineering Solutions Using Azure Databricks certification exam is designed for professionals who want to validate their expertise in building and managing data engineering solutions using Azure Databricks. This credential focuses on practical, real-world capabilities, ensuring that candidates can design, implement, and maintain scalable data pipelines while adhering to modern data governance standards. Passing the exam will help candidates earn the title of Microsoft Certified: Azure Databricks Data Engineer Associate.

Role Expectations and Core Expertise

As a candidate for this certification, you are expected to demonstrate strong proficiency in data integration, transformation, and modeling. The role emphasizes the ability to design efficient data workflows, optimize processing pipelines, and ensure reliability across data engineering operations.

A key aspect of this role is maintaining high data quality and implementing governance practices using Unity Catalog. This includes managing access controls, ensuring compliance, and maintaining consistency across datasets within the Azure Databricks environment.

Technical Skills and Knowledge Areas

To succeed in the DP-750 exam, you should be comfortable working with both SQL and Python for data ingestion and transformation tasks. These skills are essential for building scalable and efficient data processing solutions.

In addition, familiarity with modern development practices is expected. This includes experience with the Software Development Lifecycle (SDLC) and version control systems such as Git, which are critical for collaboration and maintaining code quality in data engineering projects. You should also have working knowledge of key Azure services, including:

- Microsoft Entra for identity and access management

- Azure Data Factory for data orchestration

- Azure Monitor for tracking performance and troubleshooting

Key Responsibilities in the Role

Professionals preparing for this certification are typically involved in a range of data engineering activities within Azure Databricks environments. These responsibilities include:

- Environment Setup and Configuration

- Configuring Azure Databricks workspaces, clusters, and resources to support data processing workloads efficiently.

- Data Governance and Security

- Implementing security controls and managing data access using Unity Catalog to ensure compliance and proper governance.

- Data Preparation and Processing

- Designing and executing data transformation workflows that prepare raw data for analysis and downstream applications.

- Pipeline Deployment and Maintenance

- Building, deploying, and maintaining robust data pipelines while ensuring performance optimization and fault tolerance.

Collaboration and Work Environment

Data engineers working with Azure Databricks rarely operate in isolation. This role requires close collaboration with a variety of stakeholders, including administrators, platform architects, solution architects, data scientists, and data analysts.

Together, these teams contribute to designing, deploying, and securing end-to-end data solutions that align with organizational goals. Effective communication and coordination are essential to ensure that data systems are both scalable and reliable.

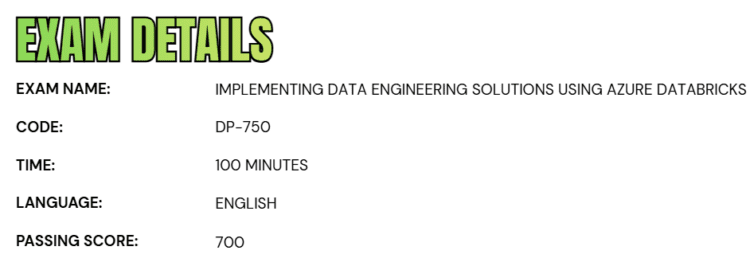

Exam Details

- The DP-750 exam, titled Implementing Data Engineering Solutions Using Azure Databricks, is an intermediate-level certification assessment designed for professionals working in data engineering roles. It evaluates a candidate’s ability to design, build, and manage data engineering solutions within the Azure Databricks environment.

- To successfully pass the exam, candidates must achieve a minimum score of 700 or higher.

- The total duration of the assessment is 100 minutes, during which candidates may encounter a combination of standard questions and interactive components that assess practical understanding.

- The DP-750 exam is conducted in a proctored environment, ensuring the integrity and credibility of the certification process. Candidates also have the option to familiarize themselves with the testing interface by exploring the official exam sandbox prior to attempting the actual exam.

- Currently, the DP-750 exam is available in English, and it is structured to align with real-world data engineering scenarios, making it a relevant and practical certification for aspiring and experienced data engineers.

Course Outline

Microsoft DP-750: Implementing Data Engineering Solutions Using Azure Databricks exam covers the following topics:

1. Understand setting up and configuring an Azure Databricks environment (15–20%)

Selecting and configuring compute in a workspace

- Choosing an appropriate compute type, including job compute, serverless, warehouse, classic compute, and shared compute

- Configuring compute performance settings, including CPU, node count, autoscaling, termination, node type, cluster size, and pooling

- Configuring compute feature settings, including Photon acceleration, Azure Databricks runtime/Spark version, and machine learning

- Installing libraries for a compute resource

- Configure access permissions to a compute resource

Creating and organizing objects in Unity Catalog

- Applying naming conventions based on requirements, including isolation, development environment, and external sharing

- Creating a catalog based on requirements

- Creating a schema based on requirements

- Creating volumes based on requirements

- Create tables, views, and materialized views

- Implementing a foreign catalog by configuring connections

- Implementing data definition language (DDL) operations on managed and external tables

- Configuring AI/BI Genie instructions for data discovery

2. Process of securing and governing Unity Catalog objects (15–20%)

Securing Unity Catalog objects

- Granting privileges to a principal (user, service principal, or group) for securable objects in Unity Catalog

- Implementing table- and column-level access control and row-level security

- Accessing Azure Key Vault secrets from within Azure Databricks

- Authenticating data access by using service principals

- Authenticating resource access by using managed identities

Governing Unity Catalog objects

- Creating, implementing, and preserving table and column definitions and descriptions for data discovery

- Configuring attribute-based access control (ABAC) by using tags and policies

- Configuring row filters and column masks

- Applying data retention policies

- Setting up and managing data lineage tracking by using Catalog Explorer, including owner, history, dependencies, and lineage

- Configuring audit logging

- Designing and implementing a secure strategy for Delta Sharing

3. Preparing and processing data (30–35%)

Designing and implementing data modeling in Unity Catalog

- Designing logic for data ingestion and data source configuration, including extraction type and file type

- Choosing an appropriate data ingestion tool, including Lakeflow Connect, notebooks, and Azure Data Factory

- Choosing a data loading method, including batch and streaming

- Choose a data table format, such as Parquet, Delta, CSV, JSON, or Iceberg

- Designing and implementing a data partitioning scheme

- Choosing a slowly changing dimension (SCD) type

- Choosing granularity on a column or table based on requirements

- Designing and implementing a temporal (history) table to record changes over time

- Designing and implementing a clustering strategy, including liquid clustering, Z-ordering, and deletion vectors

- Choosing between managed and unmanaged tables

Ingesting data into Unity Catalog

- Ingesting data by using Lakeflow Connect, including batch and streaming

- Ingest data by using notebooks, including batch and streaming

- Ingesting data by using SQL methods, including CREATE TABLE … AS (CTAS), CREATE OR REPLACE TABLE, and COPY INTO

- Ingesting data by using a change data capture (CDC) feed

- Ingest data by using Spark Structured Streaming

- Ingesting streaming data from Azure Event Hubs

- Ingesting data by using Lakeflow Spark Declarative Pipelines, including Auto Loader

Cleanse, transform, and load data into Unity Catalog

- Profile data to generate summary statistics and assess data distributions

- Choosing appropriate column data types

- Identifying and resolving duplicate, missing, and null values

- Transforming data, including filtering, grouping, and aggregating data

- Transforming data by using join, union, intersect, and except operators

- Transform data by denormalizing, pivoting, and unpivoting data

- Loading data by using merge, insert, and append operations

Implementing and managing data quality constraints in Unity Catalog

- Implementing validation checks, including nullability, data cardinality, and range checking

- Implement data type checks

- Implementing schema enforcement and manage schema drift

- Managing data quality with pipeline expectations in Lakeflow Spark Declarative Pipelines

4. Understand about deploying and maintaining data pipelines and workloads (30–35%)

Designing and implementing data pipelines

- Designing order of operations for a data pipeline

- Choosing between notebook and Lakeflow Spark Declarative Pipelines

- Designing task logic for Lakeflow Jobs

- Designing and implementing error handling in data pipelines, notebooks, and jobs

- Creating a data pipeline by using a notebook, including precedence constraints

- Creating a data pipeline by using Lakeflow Spark Declarative Pipelines

- Creating a job, including setup and configuration

- Configuring job triggers

- Scheduling a job

- Configuring alerts for a job

- Configuring automatic restarts for a job or a data pipeline

Implementing development lifecycle processes in Azure Databricks

- Applying version control best practices using Git

- Managing branching, pull requests, and conflict resolution

- Implementing a testing strategy, including unit tests, integration tests, end-to-end tests, and user acceptance testing (UAT)

- Configuring and packaging Databricks Asset Bundles

- Deploying a bundle by using the Azure Databricks command-line interface (CLI)

- Deploying a bundle by using REST APIs

Monitoring, troubleshooting, and optimizing workloads in Azure Databricks

- Monitoring and managing cluster consumption to optimize performance and cost

- Troubleshooting and repairing issues in Lakeflow Jobs, including repair, restart, stop, and run functions

- Troubleshooting and repairing issues in Apache Spark jobs and notebooks, including performance tuning, resolving resource bottlenecks, and cluster restart

- Investigating and resolving caching, skewing, spilling, and shuffle issues by using a Directed Acyclic Graph (DAG), the Spark UI, and query profile

- Optimizing Delta tables for performance and cost, including OPTIMIZE and VACUUM commands

- Implementing log streaming by using Log Analytics in Azure Monitor

- Configuring alerts by using Azure Monitor

Microsoft Certified: Azure Databricks Data Engineer Associate (DP-750) Exam FAQs

Microsoft Exam Policies

Microsoft maintains a well-defined framework of exam policies to ensure a fair, consistent, and reliable certification experience for all candidates. Understanding these policies—particularly those related to retake rules and scoring methodology—helps candidates plan their preparation effectively and approach the exam with clear expectations.

– Retake Policy

Microsoft’s retake policy is structured to encourage meaningful preparation between attempts rather than repeated immediate retries. If a candidate does not pass on the first attempt, they must wait at least 24 hours before scheduling a second attempt. For any additional attempts beyond the second, a mandatory waiting period of 14 days is required between each try.

Candidates are allowed up to five attempts within a 12-month period, starting from the date of their first exam attempt. If all five attempts are used without achieving a passing score, the candidate must wait 12 months from the initial attempt date before becoming eligible to take the exam again. Once a candidate passes the exam, retaking it is not permitted unless the certification expires. It is also important to note that each attempt, including retakes, requires payment of the exam fee.

– Scoring Methodology

Microsoft certification exams are evaluated using a scaled scoring system that ranges from 1 to 1,000, with 700 typically set as the passing threshold. This scoring approach is not a direct percentage calculation; instead, it reflects a candidate’s overall competence by considering factors such as the difficulty level of questions, variations in exam versions, and the range of skills being tested.

For Microsoft Office certification exams, the same scoring scale is used, although the required passing score may differ depending on the specific exam. This method ensures a balanced and standardized evaluation process, providing a more accurate representation of a candidate’s practical knowledge and technical proficiency across different exam formats.

Microsoft Certified: Azure Databricks Data Engineer Associate (DP-750) Exam Study Guide

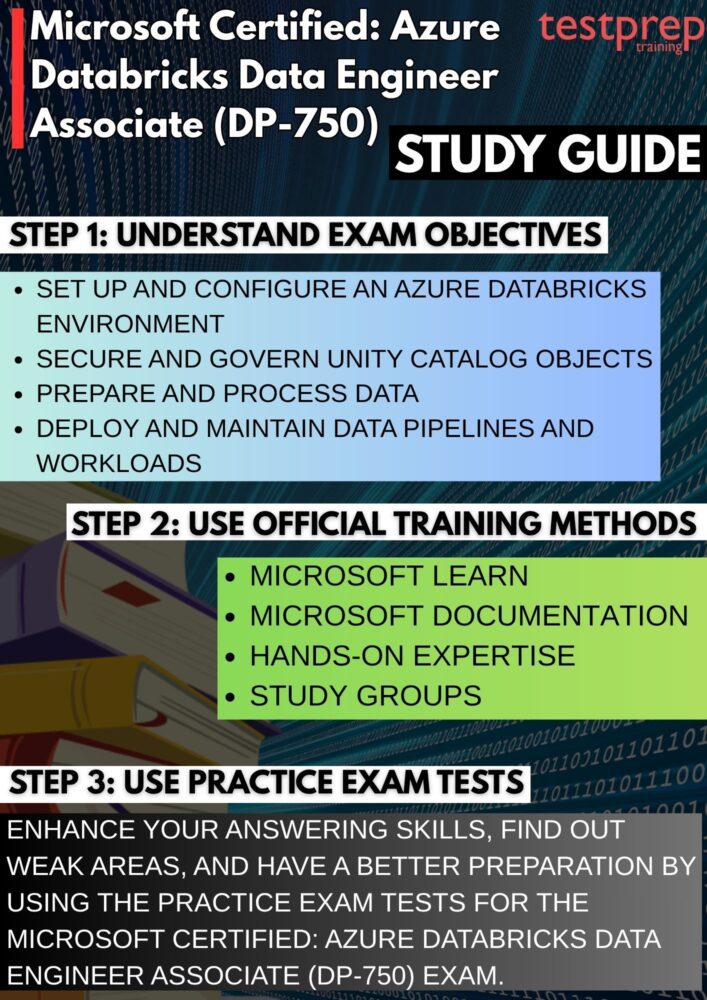

1. Thoroughly Understand the Exam Objectives and Skills Measured

Start by carefully analyzing the official exam guide, which outlines all the domains and subtopics covered in the DP-750 exam. Go beyond a simple review—break each domain into smaller concepts such as data ingestion, transformation, pipeline optimization, and governance with Unity Catalog.

Map these topics against your existing knowledge and categorize them into three groups: strong, moderate, and weak areas. This allows you to prioritize your study plan effectively. Pay close attention to weightage distribution across domains, as it helps you allocate study time proportionally. Understanding the scope of the DP-750 exam ensures that your preparation remains targeted and avoids unnecessary effort on irrelevant topics.

2. Build Conceptual Clarity with Microsoft Learn Learning Paths

Microsoft Learn offers structured and exam-aligned modules specifically designed for Azure Databricks and data engineering roles. These learning paths provide step-by-step guidance, starting from foundational concepts to more advanced implementations such as Delta Lake optimization and pipeline orchestration.

While going through these modules, focus on understanding why a particular approach is used, not just how. Complete all interactive exercises and knowledge checks, as they are designed to reinforce learning through practical scenarios. Make notes of important concepts, commands, and configurations, as these will be useful during revision. However, the training path for this exam includes the following course:

– Implement Data Engineering Solutions Using Azure Databricks

The DP-750T00-A course provides a concise, hands-on introduction to building end-to-end data engineering solutions using Azure Databricks. It covers environment setup, data ingestion, transformation, and deploying optimized pipelines, with a strong focus on governance and security using Unity Catalog. By the end, learners gain practical skills to implement and manage scalable, production-ready lakehouse solutions.

Further, this course is ideal for data engineers with basic knowledge of data analytics, cloud storage, and data organization. Candidates should be comfortable with SQL and Python, familiar with Azure Databricks and Unity Catalog, and have a foundational understanding of Azure security (including Microsoft Entra ID) and Git version control.

3. Strengthen Technical Depth Using Official Microsoft Documentation

After building a foundational understanding, transition to Microsoft’s official documentation for deeper technical insights. Documentation provides detailed explanations of features such as cluster configurations, job scheduling, performance tuning, and security implementation using Unity Catalog.

Use documentation to clarify advanced topics like partitioning strategies, caching mechanisms, and monitoring workloads. It is also helpful for understanding edge cases and limitations, which are often tested in scenario-based questions. Combining documentation study with hands-on practice ensures that you not only understand concepts but can also apply them effectively.

4. Develop Hands-On Expertise in Azure Databricks

Practical experience is a critical component of DP-750 preparation. Work directly in an Azure Databricks environment to gain familiarity with real-world workflows. Practice creating and managing clusters, writing and executing notebooks, and building end-to-end data pipelines.

Experiment with both SQL and Python to perform data ingestion and transformation tasks. Work with sample datasets to simulate real scenarios such as batch processing, streaming data, and incremental data loads. Additionally, practice implementing data governance using Unity Catalog, including managing permissions and securing data assets.

5. Engage with Study Groups and Professional Communities

Collaborating with others can significantly enhance your learning experience. Join study groups, technical forums, or professional communities where DP-750 candidates and Azure professionals share insights and discuss challenges.

Participating in discussions helps you gain alternative perspectives on problem-solving and exposes you to real-world use cases. It also allows you to stay updated on best practices and common pitfalls. Teaching or explaining concepts to others within these groups can further reinforce your own understanding.

6. Evaluate Your Readiness with Practice Tests and Mock Exams

Practice tests are essential for assessing your preparation level and identifying knowledge gaps. Attempt full-length mock exams under timed conditions to simulate the actual exam environment. This helps you build time management skills and reduces exam-day anxiety.

After each test, perform a detailed review of your performance. Focus not only on incorrect answers but also on questions you guessed correctly. Analyze why a particular answer is right or wrong and revisit the concepts if needed. Repeated practice improves both accuracy and confidence.

7. Continuous Revision and Targeted Improvement

As you progress, dedicate time to revisiting key topics and refining your understanding. Focus especially on weaker areas identified through practice tests and self-assessment. Use a combination of notes, documentation, and hands-on exercises to reinforce these concepts.

Create a revision plan for the final phase of your preparation, ensuring that all major domains are covered. Avoid learning entirely new topics at the last moment; instead, consolidate your existing knowledge and strengthen your problem-solving approach.

8. Simulate Real Exam Scenarios and Final Preparation

In the final stage, aim to replicate real exam conditions as closely as possible. Attempt multiple full-length practice exams for DP-750 in a distraction-free environment and adhere strictly to time limits. This will help you build endurance and maintain focus throughout the 100-minute exam duration.

Additionally, review important commands, configurations, and common troubleshooting scenarios. Ensure that you are comfortable interpreting scenario-based questions, as these form a significant portion of the exam.