As artificial intelligence advances beyond chatbots and static automation, organizations are entering a new phase where AI systems can plan, make decisions, and act with a degree of autonomy. The Microsoft AB-100: Agentic AI Business Solutions Architect certification is a direct response to this shift. It is built for professionals who are expected not just to understand AI capabilities, but to design intelligent systems that operate within real business environments, align with enterprise goals, and remain governed, secure, and trustworthy.

Preparing for the AB-100 exam requires more than surface-level knowledge of AI tools or terminology. Candidates must develop a clear understanding of how agentic AI solutions are architected, evaluated, and justified from a business perspective. This guide is designed to help students understand the intent of the certification, the role it targets, and the mindset required to succeed. Before diving into exam domains and preparation strategies, it is worth understanding why the certification matters in today’s AI-driven organizations.

Positioning the AB-100 Certification in the Age of Agentic AI

The rapid evolution of artificial intelligence has moved enterprise systems beyond passive automation into a new phase defined by autonomy, reasoning, and coordinated action. This shift—commonly described as agentic AI—is reshaping how organizations design solutions, make decisions, and scale intelligence across business operations. Microsoft’s AB-100: Agentic AI Business Solutions Architect certification emerges directly from this transformation, reflecting a growing demand for professionals who can architect AI systems that act with intent, context, and accountability.

Agentic AI as a Business Architecture Discipline

Agentic AI is not simply an extension of generative AI capabilities. It represents a fundamentally different architectural approach where intelligent agents are designed to plan tasks, invoke tools, collaborate with other agents, and operate within defined governance boundaries. In enterprise environments, these systems are expected to support complex workflows, adapt to changing inputs, and deliver outcomes that align with strategic objectives rather than isolated technical outputs.

The AB-100 certification acknowledges this architectural shift. It frames agentic AI as a business-enabling capability, not a standalone technology. Candidates are evaluated on their ability to design systems where AI agents operate as part of a broader solution ecosystem—integrated with data platforms, business applications, security controls, and human oversight mechanisms.

Why Microsoft Introduced AB-100 Now

As organizations accelerate AI adoption, many struggle not with model availability but with solution coherence—how AI fits into existing processes, how decisions are governed, and how outcomes are measured. Microsoft designed AB-100 to address this gap. The certification focuses on professionals who can translate business intent into agentic AI architectures that are scalable, compliant, and operationally sustainable.

Rather than testing isolated product knowledge, AB-100 emphasizes architectural judgment. It validates whether a candidate can select appropriate agent patterns, determine orchestration strategies, design integration points, and anticipate operational risks. This makes the certification particularly relevant in environments where AI solutions must operate reliably across departments, geographies, and regulatory frameworks.

Role of the Microsoft AB-100 Certification

The Agentic AI Business Solutions Architect role sits at the intersection of strategy, technology, and governance. Professionals in this role are expected to work closely with stakeholders to identify where autonomous AI can create value, while also ensuring that such systems remain transparent, secure, and aligned with organizational policies.

AB-100 candidates are typically responsible for shaping solution blueprints rather than implementing individual components. Their focus includes defining agent responsibilities, establishing decision boundaries, designing escalation paths, and ensuring that AI-driven actions remain auditable and explainable. The certification reflects this responsibility by prioritizing scenario-based evaluation over procedural tasks.

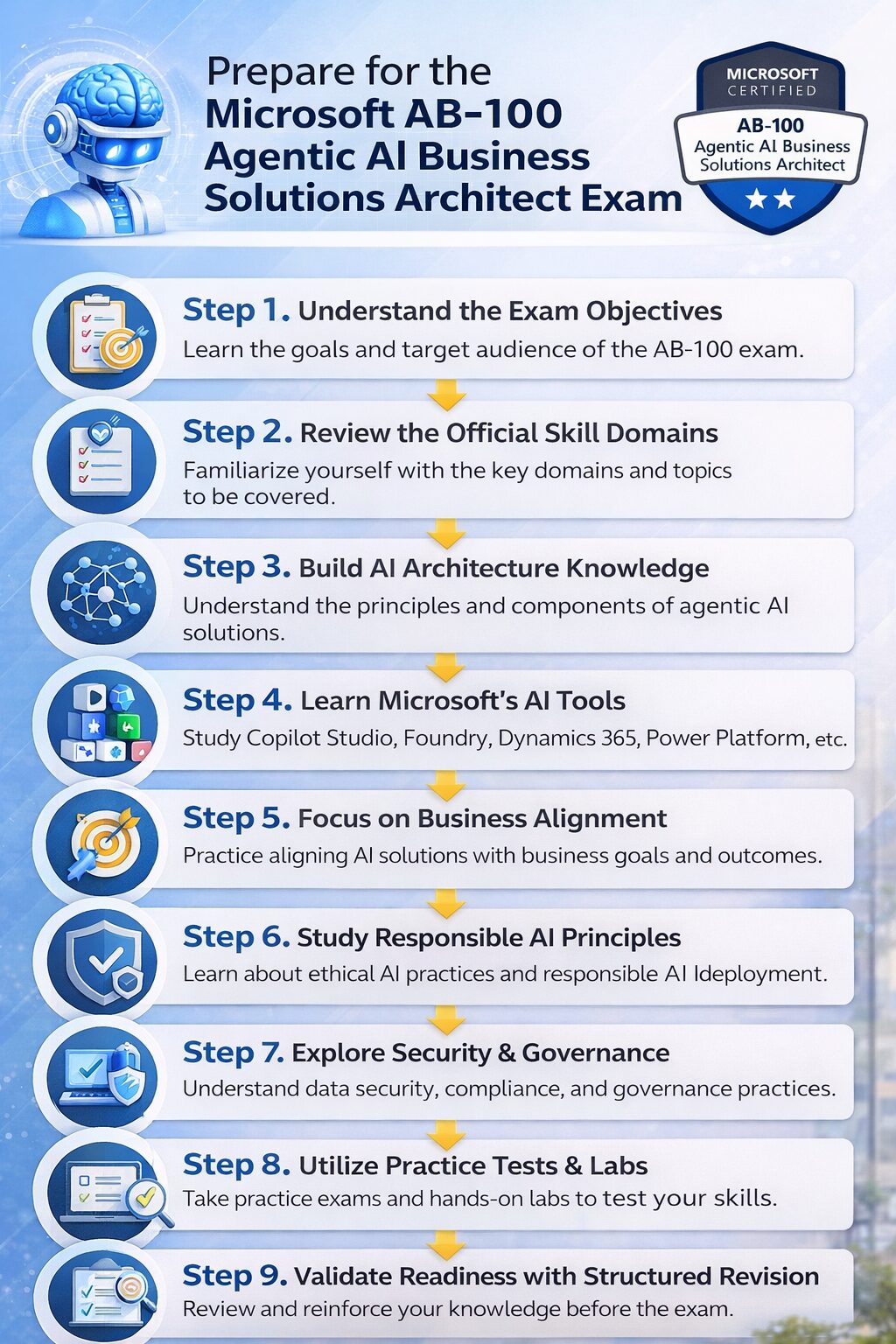

How to Prepare for the Microsoft AB-100 Exam: Study Guide

Preparing for AB-100 requires a mindset shift. Success is less about memorizing features and more about understanding why certain architectural choices make sense in specific business contexts. This guide is structured to help learners progressively build that perspective—starting with foundational concepts, moving through design reasoning, and culminating in scenario-driven decision-making.

Instead of presenting disconnected topics, the preparation journey emphasizes continuity: how business requirements influence agent design, how architecture choices affect governance, and how deployment considerations shape long-term solution viability. Each section is designed to reinforce architectural thinking, ensuring that learners are not just exam-ready, but role-ready.

Step 1: Understand the AB‑100 Details and Areas

The Microsoft AB‑100: Agentic AI Business Solutions Architect certification is designed for professionals who operate at the strategic intersection of AI, business architecture, and solution delivery. Unlike exams focused solely on technical implementation, AB‑100 evaluates whether a candidate can envision, design, and oversee AI-powered enterprise solutions that are secure, scalable, and aligned with organizational goals.

The Professional Role Behind AB‑100

The Agentic AI Business Solutions Architect is not a developer or system operator but an enterprise architect for AI-first initiatives. Professionals in this role lead the transformation of business processes through AI by integrating multiple Microsoft technologies, including Dynamics 365, Power Platform, Copilot Studio, and Foundry Tools. They translate complex business needs into autonomous, agentic AI solutions that optimize decision-making, efficiency, and innovation.

In practice, this means understanding where AI can generate measurable business impact, how agents interact within systems, and how to ensure governance and compliance across autonomous workflows.

Core Competencies Assessed

AB‑100 validates a set of advanced competencies reflecting both technical understanding and strategic architectural judgment:

- Architectural Design: Ability to design agentic-first, multi-agent orchestrated solutions that are scalable, secure, and cross-platform.

- AI Integration Expertise: Knowledge of Microsoft AI applications, Foundry Tools, AI models, and multi-agent orchestration, including Agent2Agent (A2A) and Model Context Protocol (MCP) standards.

- Business Alignment: Skill in translating business objectives into measurable outcomes, applying ROI analysis, and ensuring solutions meet enterprise KPIs.

- Responsible AI Practices: Ability to embed ethical AI principles, compliance measures, and governance frameworks into solutions.

- Operational Leadership: Proficiency in monitoring agent performance, interpreting telemetry, securing data workflows, and guiding continuous improvement.

Key Responsibilities Reflected in the Exam

The exam mirrors real-world duties of an AI solution architect. Candidates must demonstrate the ability to:

- Define architecture strategies that integrate AI and autonomous agents into business solutions.

- Develop roadmaps for agentic-first business processes and AI adoption.

- Analyze and interpret complex business and technical requirements to design comprehensive solutions.

- Prototype AI components to showcase transformative capabilities.

- Guide end-to-end implementation while ensuring alignment with security, scalability, and enterprise goals.

- Promote AI adoption across business units and operational cycles, creating cohesive application lifecycle management (ALM) strategies.

Exam Intent and Structure

The AB‑100 exam is scenario-based and role-focused, reflecting authentic enterprise challenges. It is structured around three primary domains:

- Plan AI-Powered Business Solutions: Evaluate opportunities, assess feasibility, and determine strategic alignment with enterprise goals.

- Design AI-Powered Business Solutions: Architect multi-agent, cross-platform solutions that integrate Microsoft AI services and enforce responsible AI practices.

- Deploy AI-Powered Business Solutions: Oversee operational deployment, monitor agent performance, implement governance, and measure solution outcomes.

Candidates encounter realistic scenarios that require weighing trade-offs, selecting the optimal architectural path, and anticipating operational impacts. The exam runs for 100 minutes in a proctored environment and includes interactive components, reflecting the complexity and applied nature of the role.

Preparing with the Role in Mind

Understanding the role, competencies, and exam intent is critical to developing an effective study strategy. AB‑100 preparation should focus on architectural reasoning, scenario analysis, and business alignment rather than memorizing product features. Candidates benefit from engaging with:

- Case studies simulating enterprise AI challenges

- Design pattern analysis and trade-off exercises

- Exercises articulating why certain architectural approaches align with business and governance objectives

Step 2: Breaking Down the Official AB‑100 Exam Skill Domains

To prepare effectively for the Microsoft AB‑100: Agentic AI Business Solutions Architect certification, it is essential to understand what the exam evaluates and how it aligns with real-world architectural responsibilities. The exam is organized around three interconnected skill domains, each representing a distinct phase of the AI solution lifecycle—from understanding business strategy to operational deployment. Grasping these domains helps learners approach their preparation strategically rather than relying on memorization of features or technical checklists.

Exam Domains and Their Emphasis

The AB‑100 exam assesses proficiency across three primary domains:

- Planning AI-Powered Business Solutions — roughly 25–30% of the exam

- Designing AI-Powered Business Solutions — roughly 25–30% of the exam

- Deploying AI-Powered Business Solutions — roughly 40–45% of the exam

This distribution reflects Microsoft’s emphasis on not only designing but also operationalizing and managing AI solutions in real enterprise environments. The heavier weighting on deployment highlights the importance of practical oversight, governance, and sustainability of AI-driven systems.
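One practical use of these weights is to budget study time in proportion to them. The sketch below is illustrative only: it takes the midpoint of each approximate range listed above and normalizes, since the midpoints do not sum to exactly 100. The 40-hour budget and the function name are assumptions for the example, not an official recommendation.

```python
# Approximate domain weight ranges (percent), as listed above.
DOMAIN_WEIGHTS = {
    "Plan": (25, 30),
    "Design": (25, 30),
    "Deploy": (40, 45),
}


def allocate_study_hours(total_hours: float) -> dict[str, float]:
    """Split study hours in proportion to each domain's midpoint weight."""
    midpoints = {
        name: (low + high) / 2 for name, (low, high) in DOMAIN_WEIGHTS.items()
    }
    # Midpoints sum to 97.5, so normalize instead of assuming 100.
    total_weight = sum(midpoints.values())
    return {
        name: round(total_hours * weight / total_weight, 1)
        for name, weight in midpoints.items()
    }


# Hypothetical 40-hour study budget.
study_plan = allocate_study_hours(40)
for domain, hours in study_plan.items():
    print(f"{domain}: {hours} h")
```

Under these assumptions, the deployment domain receives the largest share of the budget, which matches the emphasis described above.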

Domain 1: Planning AI-Powered Business Solutions

The first domain focuses on the architect’s ability to translate business needs into actionable AI strategies. It goes beyond identifying use cases; it requires analyzing the business context, evaluating organizational readiness, and defining measurable outcomes.

In this domain, candidates are expected to:

- Determine where agentic AI can provide tangible value across workflows and decision-making processes.

- Assess data readiness, including the completeness, accuracy, and accessibility of organizational data for training and decisioning.

- Define strategic approaches to integrating prebuilt or custom agents, while aligning with organizational priorities.

- Establish guidelines for knowledge sources, prompts, and operational constraints that will shape agent behavior.

- Evaluate the trade-offs of building custom models versus leveraging Microsoft’s prebuilt solutions, considering ROI and long-term maintainability.

In effect, this domain tests strategic foresight and solution framing skills, ensuring the candidate can plan AI initiatives that are technically viable and business-relevant.

Domain 2: Designing AI-Powered Business Solutions

After planning, the design domain examines how to structure and orchestrate AI components to create robust and scalable solutions. Here, the focus shifts from conceptual strategy to solution architecture.

Candidates need to demonstrate the ability to:

- Architect multi-agent systems using tools like Copilot Studio and Microsoft Foundry.

- Apply agent orchestration patterns and protocols, such as Agent2Agent (A2A) and Model Context Protocol (MCP), to ensure seamless coordination.

- Define integration strategies with business applications such as Dynamics 365, Power Platform, and Microsoft 365 services.

- Design agent behaviors that automate workflows, reason over complex data, and respond adaptively to changing business conditions.

- Ensure solution reliability, maintainability, and extensibility while maintaining alignment with enterprise standards.

This domain evaluates the candidate’s ability to turn strategy into a concrete architecture, balancing innovation with governance and operational reliability.

Domain 3: Deploying AI-Powered Business Solutions

Deployment is the largest portion of the exam and emphasizes bringing AI solutions into operational reality. It tests a candidate’s ability to oversee solution performance, governance, and continuous improvement after launch.

Key focus areas include:

- Monitoring agent operations and analyzing performance metrics for iterative optimization.

- Implementing testing, validation, and evaluation processes to ensure AI outputs meet business requirements.

- Establishing Application Lifecycle Management (ALM) processes covering data, models, agents, and integrations.

- Integrating governance, security, and responsible AI practices directly into deployed solutions.

- Managing compliance, access control, data protection, and auditability across autonomous agents.

This domain ensures that certified professionals can operationalize AI responsibly, maintaining both performance and compliance at enterprise scale.

How the Domains Reflect Real-World Responsibilities

The progression from planning, through design, to deployment mirrors the natural workflow of an enterprise AI architect. Candidates are expected to:

- Understand organizational strategy and constraints before committing to technical decisions.

- Translate business objectives into system-level architecture.

- Ensure deployed solutions remain secure, reliable, and aligned with governance and compliance standards.

By structuring the exam around these domains, Microsoft validates not only knowledge but also applied architectural reasoning and strategic decision-making skills essential for leading agentic AI initiatives.

Step 3: Build Strong Foundations in Agentic AI Concepts

To excel in the Microsoft AB‑100: Agentic AI Business Solutions Architect exam, candidates must first develop a solid conceptual foundation in agentic AI. This step emphasizes understanding not only the capabilities of AI technologies but also their application within enterprise systems, governance structures, and business strategies. Building this foundation ensures that learners can think critically about designing, integrating, and operationalizing AI solutions in ways that deliver measurable business impact.

Understanding Agentic AI in the Enterprise Context

Agentic AI goes beyond traditional automation or generative AI by creating systems capable of autonomous decision-making, collaboration across agents, and adaptation to evolving business needs. Architects are expected to design solutions where AI agents operate with intent, interact across multiple platforms, and remain accountable to governance and compliance frameworks.

Key conceptual areas include:

- Autonomous agent behavior: How agents make decisions and act independently while adhering to organizational policies.

- Multi-agent orchestration: Designing interactions between multiple AI agents to achieve coordinated outcomes.

- Integration with business applications: Embedding agents within Dynamics 365, Microsoft 365, and Power Platform to enhance workflows.

- Responsible AI principles: Ensuring ethical, secure, and compliant operation across AI-powered solutions.
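To make the idea of multi-agent orchestration more concrete, the following is a minimal in-process sketch. It is not how Copilot Studio or any Microsoft service implements orchestration; the `Agent` and `Orchestrator` classes, the skill names, and the fallback to human review are all hypothetical and exist only to illustrate the pattern of routing work to a capable agent within defined boundaries.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Agent:
    """A hypothetical specialist agent with a declared set of skills."""
    name: str
    skills: frozenset[str]
    handle: Callable[[str], str]


class Orchestrator:
    """Routes each request to the first agent that declares the needed skill."""

    def __init__(self, agents: list[Agent]) -> None:
        self.agents = agents

    def route(self, skill: str, request: str) -> str:
        for agent in self.agents:
            if skill in agent.skills:
                return f"{agent.name}: {agent.handle(request)}"
        # No capable agent: hand off to a person instead of guessing.
        return f"human-review: no agent registered for '{skill}'"


billing = Agent("billing-agent", frozenset({"invoice", "refund"}),
                lambda req: f"processed '{req}'")
support = Agent("support-agent", frozenset({"troubleshoot"}),
                lambda req: f"diagnosed '{req}'")
orchestrator = Orchestrator([billing, support])

routed = orchestrator.route("refund", "refund order 42")
unrouted = orchestrator.route("legal", "review contract")
print(routed)
print(unrouted)
```

The design choice worth noticing is the explicit fallback: a request outside every agent's declared skills is surfaced for human review rather than handled by a best guess.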

Core Components and Technologies

A strong foundation also requires familiarity with Microsoft’s AI ecosystem and its supporting tools:

- Copilot Studio: Customizing AI agents, creating prompts, and designing agent workflows.

- Microsoft Foundry and Foundry Models: Developing intelligent, extensible AI components for enterprise applications.

- Dynamics 365 and Power Platform integration: Applying agentic AI to customer service, finance, supply chain, and operational processes.

- Open standards and protocols: Leveraging Agent2Agent (A2A) and Model Context Protocol (MCP) to ensure interoperability and consistency.

Understanding these tools helps candidates design solutions that leverage the full spectrum of Microsoft AI capabilities, enabling agents to perform complex tasks and interact seamlessly with existing systems.

Business Alignment and Outcome Measurement

Beyond technical knowledge, architects must understand how AI supports business objectives. This involves evaluating:

- Use cases for automation, analytics, and decision-making.

- Data readiness, quality, and grounding requirements.

- Return on investment (ROI) and total cost of ownership for AI solutions.

- When to extend existing agents versus building custom models.
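The ROI and total cost of ownership questions above come down to simple arithmetic that is worth practicing. The sketch below uses common textbook definitions (ROI as net benefit divided by cost, payback as upfront cost divided by monthly net benefit). All figures are invented for illustration, and the function names are assumptions rather than part of any Microsoft tool.

```python
def total_cost_of_ownership(upfront: float, monthly_run_cost: float,
                            months: int) -> float:
    """Upfront build cost plus recurring run cost over the period."""
    return upfront + monthly_run_cost * months


def simple_roi(total_benefit: float, total_cost: float) -> float:
    """Net benefit as a fraction of cost (0.5 means a 50% return)."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost


def payback_months(upfront: float, monthly_net_benefit: float) -> float:
    """Months needed for net monthly benefit to recover the upfront cost."""
    if monthly_net_benefit <= 0:
        raise ValueError("solution never pays back at this benefit level")
    return upfront / monthly_net_benefit


# Hypothetical figures for a 36-month evaluation window.
tco = total_cost_of_ownership(upfront=120_000, monthly_run_cost=5_000, months=36)
benefit = 12_500 * 36  # assumed gross monthly benefit of 12,500
roi = simple_roi(benefit, tco)
months_to_payback = payback_months(120_000, 12_500 - 5_000)
print(tco, roi, months_to_payback)
```

In an exam scenario, the same comparison can be run for a prebuilt agent and a custom build to see which option clears the organization's return threshold.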

Governance, Security, and Operational Reliability

Foundational knowledge must also cover operational and governance aspects, including:

- Securing AI models and data workflows, enforcing access controls, and safeguarding against misuse.

- Implementing monitoring and telemetry to optimize agent behavior.

- Applying Microsoft’s Responsible AI guidelines to maintain compliance and ethical standards.

- Designing audit trails and operational protocols to ensure accountability.

By mastering these concepts, candidates can design AI systems that are both innovative and trustworthy, which is critical for enterprise adoption.

Step 4: Master Microsoft’s Agentic AI Architecture Stack

A central aspect of preparing for the Microsoft AB‑100: Agentic AI Business Solutions Architect exam is developing a deep and practical understanding of the architectural stack that underpins agentic AI on the Microsoft platform. This step moves beyond conceptual knowledge into the realm of how intelligent systems are assembled, integrated, and governed using Microsoft technologies. Candidates must think in terms of architecture patterns, system interactions, and strategic design choices rather than individual features or isolated tools.

The agentic AI stack is not a monolith; it is a layered set of services, frameworks, and patterns that work in concert to deliver autonomous, scalable, and business‑aligned solutions. Mastery of this stack means knowing how to select the right components for a given scenario, how those components interoperate, and how to enforce governance and operational controls across the entire solution.

The Architecture Landscape: Beyond Individual Components

At its core, Microsoft’s agentic AI architecture is built to support solutions where multiple AI agents act autonomously yet cohesively within enterprise contexts. This includes both prebuilt capabilities, like Copilot experiences integrated into business applications, and custom‑built agents designed to address specific organizational needs.

Key architectural concerns include orchestration, integration, scalability, security, and governance. An architect must understand not only what each layer does, but how choices at one layer affect others—particularly how design decisions impact operational outcomes and business value delivery.

Copilot Studio and Custom Agent Development

One of the central pillars of this stack is Copilot Studio, Microsoft’s environment for designing and customizing AI agents. Rather than treating AI as a black box, Copilot Studio gives architects control over:

- The behavior of agents, by defining prompts, workflows, and response patterns;

- The knowledge sources agents reference, ensuring that actions and recommendations are grounded in verified organizational data;

- The integration points with business applications, such as Microsoft Dynamics 365 and Power Platform services.

Architects must understand how to tailor Copilot components to specific enterprise needs—balancing autonomy with compliance and relevance. This involves shaping agent responses, configuring tool access, and situating agents within broader system workflows.

Microsoft Foundry and Model Foundations

Beyond Copilot Studio lies Microsoft Foundry, a platform that supports building and operationalizing custom models and components. Foundry enables architects to assemble modular building blocks, such as data transformations, reasoning layers, and domain‑specific logic, into coherent AI systems.

Foundry models can be thought of as specialized components within the agentic architecture that extend beyond general‑purpose capabilities. When architecting solutions, professionals must decide when to leverage prebuilt intelligence versus when to extend functionality through Foundry components.

A mature architectural approach considers:

- The scope of customization required;

- The data governance policies that apply to custom models;

- The lifecycle implications of supporting custom versus prebuilt intelligence within production systems.

Understanding Foundry’s place in the stack helps candidates discern when and how to create bespoke AI components that serve enterprise needs without compromising operational stability.

Protocols and Orchestration Patterns

Modern agentic AI solutions rarely exist in isolation. Effective architectures incorporate patterns and standards that enable agents to collaborate, delegate tasks, and orchestrate complex flows across services.

Two open protocols relevant to AB‑100 are:

- Agent2Agent (A2A): An open protocol that lets autonomous agents, including agents built on different frameworks or by different vendors, discover one another, communicate, and coordinate work.

- Model Context Protocol (MCP): An open protocol that standardizes how agents and AI applications connect to external tools and data sources, so that context and capabilities are exposed through a consistent interface.

Architects must understand how these protocols shape solution boundaries, enable modular design, and support reliable agent collaboration in multi‑agent scenarios. Applying them helps ensure that agents do not act in silos but contribute to unified business outcomes.
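For a feel of what a protocol-level interaction looks like, MCP messages are framed as JSON-RPC 2.0, and a client asks a server to run a tool with the `tools/call` method. The sketch below only builds such a request as JSON; it does not open a connection or use an MCP SDK. The tool name `lookup_order` and its `order_id` argument are hypothetical.

```python
import json
from itertools import count

# Simple incrementing request ids for this illustration.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool and argument, for illustration only.
message = build_tool_call("lookup_order", {"order_id": "SO-1001"})
print(message)
```

Architects rarely write these messages by hand, but seeing the shape clarifies why a shared protocol lets one agent reuse tools exposed by many different systems.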

Integration with Enterprise Services

Agentic AI solutions rarely operate as standalone entities. They are embedded within an ecosystem of enterprise services including:

- Dynamics 365 applications for sales, service, finance, and operations;

- Power Platform components such as Power Apps, Power Automate, and Power BI;

- Microsoft 365 ecosystem elements for collaboration, knowledge, and workflow continuity.

A critical aspect of mastering the architecture stack involves understanding how AI agents interact with these services—both at the integration layer and in terms of end‑to‑end solution behavior. For instance, an AI agent may pull contextual data from a CRM record, automate a workflow in Power Automate, and surface insights via Microsoft Teams. Recognizing these interaction patterns allows architects to design solutions that are coherent, maintainable, and strategically aligned with business processes.
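The interaction pattern in that example (read context, trigger a workflow, surface the result) can be sketched as a small pipeline. Every function below is a stand-in that returns canned data; none of them call Dynamics 365, Power Automate, or Microsoft Teams, and the names and fields are assumptions made for illustration.

```python
def fetch_crm_record(account_id: str) -> dict:
    """Stand-in for a CRM lookup; returns canned data for illustration."""
    return {"account_id": account_id, "tier": "gold", "open_cases": 3}


def start_followup_workflow(record: dict) -> str:
    """Stand-in for triggering an automation flow; returns a reference id."""
    return f"flow-{record['account_id']}"


def build_summary(record: dict, flow_ref: str) -> str:
    """Compose the message a collaboration channel would display."""
    return (f"Account {record['account_id']} ({record['tier']}) has "
            f"{record['open_cases']} open cases; follow-up {flow_ref} started.")


def run_agent_flow(account_id: str) -> str:
    record = fetch_crm_record(account_id)
    # Only automate when there is something to follow up on.
    if record["open_cases"] == 0:
        return f"Account {account_id} has no open cases; nothing to do."
    flow_ref = start_followup_workflow(record)
    return build_summary(record, flow_ref)


summary = run_agent_flow("ACME-001")
print(summary)
```

Keeping each integration behind its own function mirrors the architectural point: the agent's logic stays readable, and each connection can be governed, tested, and replaced independently.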

Security, Access, and Governance Considerations

Architectural mastery in agentic AI also requires a firm grip on security and governance. Unlike traditional applications, autonomous agents may make decisions that touch sensitive data, invoke actions, or influence business processes in real time. Architects must therefore embed governance into the architecture itself.

This includes:

- Defining access controls for agents and the resources they can access;

- Implementing auditing mechanisms that track agent activities;

- Enforcing policy guardrails, such as responsible AI principles and compliance requirements;

- Architecting telemetry and monitoring to observe system performance and detect anomalies.

Rather than treating governance as an add‑on, successful architects bake these concerns into the stack design, ensuring that the system is secure and resilient from the outset.
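A minimal sketch of what "baked in" can mean: every action an agent attempts passes through one authorization check, and every check, allowed or denied, is written to an audit trail. The `AgentPolicy` and `AuditLog` classes and the action names are hypothetical; a production system would rely on the platform's identity and logging services rather than in-memory objects.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentPolicy:
    """Least-privilege policy: the only actions this agent may perform."""
    agent_id: str
    allowed_actions: frozenset[str]


@dataclass
class AuditLog:
    """In-memory audit trail; each entry records one authorization decision."""
    entries: list[dict] = field(default_factory=list)

    def record(self, agent_id: str, action: str, allowed: bool) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,
            "allowed": allowed,
        })


def authorize(policy: AgentPolicy, action: str, audit: AuditLog) -> bool:
    """Check the action against the policy and always log the outcome."""
    allowed = action in policy.allowed_actions
    audit.record(policy.agent_id, action, allowed)
    return allowed


audit = AuditLog()
policy = AgentPolicy("invoice-agent", frozenset({"read_invoice", "send_reminder"}))
can_read = authorize(policy, "read_invoice", audit)
can_delete = authorize(policy, "delete_invoice", audit)
print(can_read, can_delete, len(audit.entries))
```

Logging denied attempts as well as allowed ones matters: denied actions are often the earliest signal that an agent is drifting outside its intended role.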

Data Foundations and Knowledge Sources

Finally, effective agentic AI architecture rests on a robust foundation of data readiness, including curated knowledge stores, structured and unstructured data sources, and governed access to that data. AI agents depend heavily on the quality, relevance, and timeliness of the data they reference.

Candidates must understand how to guide data preparation efforts such that knowledge sources:

- Support accurate reasoning and decisioning;

- Align with organizational metadata and taxonomy standards;

- Are secured and compliant with regulatory and privacy requirements.

In this way, data becomes not just a resource, but a strategic layer in the agentic architecture, shaping how solutions behave and deliver value.
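One simple way to put numbers on data readiness is a per-field completeness score over a knowledge source. The sketch below is an assumption-laden illustration: the field names, the sample records, and the 95% threshold are invented, and real readiness assessments would also cover accuracy, freshness, and access controls.

```python
def field_completeness(records: list[dict],
                       required_fields: list[str]) -> dict[str, float]:
    """Share of records with a non-empty value for each required field."""
    if not records:
        raise ValueError("no records to assess")
    scores = {}
    for name in required_fields:
        filled = sum(1 for r in records if r.get(name) not in (None, ""))
        scores[name] = filled / len(records)
    return scores


def ready_for_grounding(scores: dict[str, float], threshold: float = 0.95) -> bool:
    """True only if every required field meets the completeness threshold."""
    return all(score >= threshold for score in scores.values())


# Hypothetical knowledge-base articles; one is missing its owner.
articles = [
    {"title": "Returns policy", "owner": "ops", "classification": "internal"},
    {"title": "Warranty terms", "owner": "legal", "classification": "public"},
    {"title": "Shipping zones", "owner": "", "classification": "internal"},
    {"title": "Refund limits", "owner": "finance", "classification": "internal"},
]
scores = field_completeness(articles, ["title", "owner", "classification"])
is_ready = ready_for_grounding(scores)
print(scores, is_ready)
```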

Step 5: Focus on Business‑First Solution Design

When preparing for the Microsoft AB‑100: Agentic AI Business Solutions Architect exam, one of the most critical transitions is moving from technology awareness to strategic solution design. This step emphasizes how architects must orient their thinking around business value, stakeholder needs, and measurable outcomes—not technical capabilities in isolation. For agentic AI to be effective in real enterprises, it must serve clear business purposes, integrate into existing workflows, and support decisions in a way that maximizes organizational impact.

This section explores how to ground architectural decisions in business logic and how to design solutions that are defensible, scalable, and aligned with strategic priorities. The goal is to help learners internalize a design mindset where business objectives drive technical choices rather than the other way around.

The Essence of Business‑Driven Design

In enterprise practice, effective solution design begins with a deep understanding of the business context: the key goals the organization wants to achieve, the processes it wants to improve, and the value it expects from automation and intelligence.

This involves asking questions such as:

- What specific business problem is being solved, and how will success be measured?

- Which stakeholders are affected, and what are their expectations?

- Where are the points of friction in existing workflows, and how can agentic AI reduce them?

Architects who design successful solutions do not start with tools; they start with outcomes. Tools and technologies are then selected because they support business logic, not because they are available or interesting.

For example, an agent designed to assist customer service should not be judged simply on its ability to generate responses. Its effectiveness is determined by improvements in customer satisfaction scores, reductions in handling time, and alignment with support policies—all of which are business‑oriented metrics.

Translating Business Requirements into Architectural Choices

A business‑first design approach requires translating high‑level objectives into concrete architecture decisions. This translation often involves reconciling multiple, sometimes competing, priorities—such as speed versus accuracy, cost versus capability, or autonomy versus control.

To do this effectively, an architect must:

- Decompose overarching business goals into architectural requirements that can be addressed with agentic AI patterns.

- Identify which processes are most amenable to intelligent automation and which are better suited for augmenting human decision‑making.

- Determine data prerequisites by analyzing the quality, structure, and trustworthiness of available information sources.

During this process, it is critical to explicitly articulate success criteria that tie back to business metrics. For instance, if the business goal is to improve lead conversion, the solution design must specify how agentic AI will interact with customer data, how it will prioritize leads, and how its recommendations will be measured against conversion outcomes.
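For the lead conversion example, a success criterion might be stated as a relative lift in conversion rate over the current baseline. The sketch below shows the arithmetic; the lead counts are invented, and a real evaluation would also need a fair comparison group and enough volume for the difference to be meaningful.

```python
def conversion_rate(converted: int, total: int) -> float:
    """Fraction of leads that converted."""
    if total <= 0:
        raise ValueError("total must be positive")
    return converted / total


def relative_lift(baseline_rate: float, new_rate: float) -> float:
    """Improvement of the new rate relative to the baseline rate."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (new_rate - baseline_rate) / baseline_rate


# Hypothetical quarter: 500 leads handled each way.
baseline = conversion_rate(converted=40, total=500)        # manual prioritization
agent_assisted = conversion_rate(converted=60, total=500)  # agent-prioritized
lift = relative_lift(baseline, agent_assisted)
print(f"baseline={baseline:.2%} agent={agent_assisted:.2%} lift={lift:.0%}")
```

Writing the target this way (for example, "at least a 20% relative lift") gives the architecture a measurable outcome to be judged against.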

Aligning with Organizational Strategy and Governance

Business‑first design also means aligning agentic AI solutions with broader organizational strategies and governance frameworks. In real enterprises, no technology can operate in a vacuum; it must conform to existing risk, compliance, and operational oversight structures.

This alignment manifests in several ways:

- Integrating responsible AI principles into design logic so that solutions respect ethical constraints and regulatory mandates.

- Ensuring that agent interactions and decision paths are auditable and interpretable by business stakeholders.

- Designing controls that allow for human intervention, escalation, and override when business policies demand it.

For exam preparation, it is important to recognize that Microsoft’s AB‑100 does not merely ask whether candidates can design an agent that performs a task. It evaluates whether the candidate can design a system whose autonomy is bounded by business policy, compliance standards, and measurable outcomes.

Balancing Standard Solutions and Custom Extensions

Another important dimension of business‑first design is deciding when to leverage prebuilt capabilities versus building custom agent behavior. Microsoft’s ecosystem offers a range of prebuilt AI services and Copilot experiences that accelerate solution delivery. However, not all business needs can be addressed through standard components.

An architect must weigh several business considerations:

- Is the business requirement unique enough to justify custom agent logic?

- Does the organization have the maturity and governance to support bespoke components?

- What are the trade‑offs between speed to market and long‑term maintainability?

Such decisions are seldom purely technical. They require understanding how an organization operates today, how it expects to grow, and how it will support the solution once deployed.

Embedding Measurement and Feedback Loops

A business‑oriented design does not end with deployment; it incorporates measurement and feedback loops that link solution performance back to business outcomes. Architects should anticipate how metrics will be tracked, how performance data will be interpreted, and how insights will influence iterative improvements.

For example, if an agentic AI solution is designed to optimize inventory planning, the architecture must include mechanisms to:

- Capture performance metrics such as forecast accuracy or stock‑out frequency.

- Integrate with existing reporting frameworks to surface business KPIs.

- Enable continuous training or adjustment of agent behavior based on real-world feedback.

Understanding how measurement is embedded within architecture reinforces the idea that an AI solution is not static but evolves in tandem with business needs and system usage patterns.
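The two inventory metrics named above can be computed with a few lines of code. This sketch uses mean absolute percentage error (MAPE) as one common measure of forecast accuracy and counts a stock-out as any period ending with zero or negative inventory; both choices, and all of the sample numbers, are assumptions for illustration.

```python
def mape(actual: list[float], forecast: list[float]) -> float:
    """Mean absolute percentage error; periods with zero actuals are skipped."""
    if len(actual) != len(forecast):
        raise ValueError("actual and forecast must have the same length")
    errors = [abs(a - f) / abs(a) for a, f in zip(actual, forecast) if a != 0]
    if not errors:
        raise ValueError("no periods with non-zero actual demand")
    return sum(errors) / len(errors)


def stockout_frequency(ending_inventory: list[float]) -> float:
    """Share of periods that ended with no stock on hand."""
    if not ending_inventory:
        raise ValueError("no periods to assess")
    stockouts = sum(1 for units in ending_inventory if units <= 0)
    return stockouts / len(ending_inventory)


# Hypothetical four-week window.
actual_demand = [100, 120, 80, 100]
agent_forecast = [90, 132, 80, 110]
week_end_stock = [5, 0, 12, 0]

forecast_error = mape(actual_demand, agent_forecast)
stockout_rate = stockout_frequency(week_end_stock)
print(f"MAPE={forecast_error:.1%} stock-outs={stockout_rate:.0%}")
```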

Situating Design within Broader Enterprise Workflows

Finally, mastering business‑first design requires seeing agentic AI solutions within the larger context of enterprise workflows. Solutions should not create isolated pockets of automation; they should integrate with core business systems, enhance cross‑functional processes, and contribute to coherent digital transformation strategies.

This perspective helps candidates move beyond tactical implementations toward designs that are holistic, interconnected, and sustainable—traits that are central to success in the AB‑100 exam and in professional practice.

Step 6: Governance, Security, and Responsible AI Strategy

In enterprise environments, architecting agentic AI solutions requires more than technical proficiency and business alignment. As organizations increasingly deploy autonomous systems that act on behalf of users or business processes, there is a growing imperative to ensure that these systems operate securely, ethically, and in compliance with organizational standards and legal frameworks. The AB‑100 exam reflects this by placing significant emphasis on governance, security, and responsible AI principles as core elements of an architect’s role. Candidates must demonstrate an ability to build systems that are not just effective but trustworthy and resilient.

This step explores how governance frameworks, security considerations, and responsible AI practices are woven into the lifecycle of agentic AI architectures—ensuring that solutions perform reliably, respect regulatory constraints, and uphold ethical standards.

Embedding Governance Into AI Solution Frameworks

In the context of agentic AI, governance refers to the policies, processes, and oversight mechanisms that ensure autonomous systems align with organizational values and compliance requirements. Good governance is proactive: it anticipates potential risks, defines clear boundaries for autonomous behavior, and establishes accountability mechanisms.

A governance strategy must account for the following:

- Decision Boundaries: Defining the limits of agent autonomy so that actions taken by AI align with business policies and do not exceed acceptable operational thresholds.

- Escalation Processes: Designing mechanisms for human intervention where necessary, especially in scenarios involving sensitive data, high‑risk decisions, or ambiguous outcomes.

- Documentation and Policy Mapping: Ensuring that architectural designs and operational protocols are well documented and traceable to corporate policies and regulatory requirements.
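The three governance elements above can be sketched as a small policy gate. This is an illustrative Python example, not a Microsoft API: the `Boundary`, `ProposedAction`, and `evaluate` names, the monetary limit, and the policy reference string are all assumptions chosen for the sketch.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"  # hand off to a human reviewer
    DENY = "deny"


@dataclass(frozen=True)
class ProposedAction:
    name: str
    amount: float
    touches_sensitive_data: bool


@dataclass(frozen=True)
class Boundary:
    allowed_actions: frozenset
    auto_approve_limit: float
    policy_reference: str  # traces the boundary back to a documented policy


def evaluate(action: ProposedAction, boundary: Boundary) -> Decision:
    """Apply the decision boundary first, then the escalation rules."""
    if action.name not in boundary.allowed_actions:
        return Decision.DENY
    if action.touches_sensitive_data or action.amount > boundary.auto_approve_limit:
        return Decision.ESCALATE
    return Decision.ALLOW
```

Keeping the policy reference on the boundary itself is one simple way to make each automated decision traceable to the policy that authorized it.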

Security as a Structural Pillar

Security in agentic AI solutions extends beyond traditional network and infrastructure protection. Because autonomous agents interact with multiple systems, data sources, and decision pipelines, architects must consider:

- Data Protection: Safeguarding sensitive information that agents may access or generate. This involves encryption, access controls, and ensuring that data is used and stored in compliance with privacy regulations.

- Identity and Access Management: Defining precise permissions for agents, users, and service components. Agents should only be authorized to perform actions essential to their role, minimizing exposure to misuse or unauthorized access.

- Monitoring and Threat Detection: Setting up logging and telemetry that can detect anomalous behavior—whether due to malicious intent, system errors, or unanticipated decision pathways.
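To make the least-privilege and monitoring points concrete, here is a hedged Python sketch of a deny-by-default permission check that logs refused attempts. The agent names, permission strings, and the `is_authorized` helper are hypothetical; a production system would rely on the identity platform's own role assignments rather than an in-memory table.

```python
import logging

logger = logging.getLogger("agent_access")

# Hypothetical grants: each agent gets only what its role requires.
AGENT_PERMISSIONS: dict[str, frozenset[str]] = {
    "inventory-agent": frozenset({"inventory:read", "orders:create"}),
    "reporting-agent": frozenset({"inventory:read"}),
}


def is_authorized(agent_id: str, permission: str) -> bool:
    """Deny by default: unknown agents and ungranted permissions are refused."""
    granted = permission in AGENT_PERMISSIONS.get(agent_id, frozenset())
    if not granted:
        # Denied attempts are logged so monitoring can flag anomalous behavior.
        logger.warning("denied agent=%s permission=%s", agent_id, permission)
    return granted
```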

Operationalizing Responsible AI Principles

Responsible AI is not an abstract ideal—it is a practical design requirement for any solution that includes autonomous decision makers. Within agentic AI systems, responsible AI practices are implemented through architectural and operational controls:

- Fairness and Bias Mitigation: Ensuring that agent decisions do not systematically disadvantage individuals or groups. This requires careful selection and review of training data, continuous evaluation of outputs, and mechanisms for correction.

- Transparency and Explainability: Designing agents so that their actions and reasoning can be understood by stakeholders. This may include logging decision rationale, exposing relevant model outputs, and integrating human‑readable explanations into system dashboards.

- Accountability: Assigning responsibility for system behavior. Even when agents operate autonomously, organizations must clearly define who is accountable for outcomes—whether positive or negative.

The AB‑100 exam evaluates whether candidates can integrate responsible AI practices into system design in a way that aligns with both business needs and ethical standards.
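One way to picture the transparency and accountability controls is a structured decision record written each time an agent acts. The following Python sketch is an assumption-laden illustration: the `DecisionRecord` fields and the `record_decision` helper are invented for this example and do not correspond to any specific Microsoft service.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    agent_id: str
    action: str
    rationale: str          # human-readable explanation of the decision
    inputs_summary: dict    # the data the agent relied on
    accountable_owner: str  # person or team answerable for the outcome
    timestamp: str


def record_decision(agent_id: str, action: str, rationale: str,
                    inputs_summary: dict, accountable_owner: str) -> str:
    """Serialize one decision as a JSON line suitable for an audit log."""
    record = DecisionRecord(
        agent_id=agent_id,
        action=action,
        rationale=rationale,
        inputs_summary=inputs_summary,
        accountable_owner=accountable_owner,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    return json.dumps(asdict(record), sort_keys=True)
```

Records in this shape can feed a dashboard for stakeholders and also give reviewers the evidence they need when evaluating outputs for bias.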

Compliance, Regulation, and Cross‑Functional Alignment

As agentic AI systems move into production, they often interact with regulated domains—financial reporting, healthcare records, personal data, and more. Architects must ensure that solutions adhere not only to internal governance but also to external regulatory requirements such as data privacy laws, industry standards, and audit mandates.

This involves working closely with compliance, legal, and risk teams to:

- Map regulatory obligations to architectural controls.

- Identify potential gaps in compliance coverage.

- Establish audit trails that support regulatory reporting and review.

Cross‑functional alignment is critical because architecture decisions have implications across organizational boundaries. The architect acts as a translator between business strategy, technology implementation, and compliance assurance.
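The mapping and gap-identification activities above can be sketched as a simple set comparison. In this hypothetical Python example, the obligation names and control identifiers are placeholders, not references to any actual regulation or product feature.

```python
def find_coverage_gaps(
    obligation_to_controls: dict[str, list[str]],
    implemented_controls: set[str],
) -> dict[str, list[str]]:
    """Return, per obligation, the required controls that are not yet implemented."""
    gaps: dict[str, list[str]] = {}
    for obligation, required in obligation_to_controls.items():
        missing = sorted(set(required) - implemented_controls)
        if missing:
            gaps[obligation] = missing
    return gaps


# Hypothetical mapping agreed with compliance, legal, and risk teams.
OBLIGATIONS = {
    "data-privacy": ["encryption-at-rest", "access-review"],
    "audit-mandate": ["decision-audit-trail"],
}
IMPLEMENTED = {"encryption-at-rest", "decision-audit-trail"}

gaps = find_coverage_gaps(OBLIGATIONS, IMPLEMENTED)
```

With the sample data, only the access review remains outstanding, which gives the architect a concrete item to raise with the compliance team.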

Continuous Oversight and Lifecycle Governance

Agentic AI governance is not a one‑time checklist; it is a continuous process that spans the entire lifecycle of a solution. Once deployed, systems require ongoing monitoring, evaluation, and adjustment to maintain alignment with evolving business goals, regulatory updates, and operational realities.

Architects should design governance frameworks that include:

- Feedback loops for performance metrics, business impact, and ethical compliance.

- Periodic reviews of operational behavior to detect drift or unintended consequences.

- Mechanisms for iterative refinement of agents and control policies based on empirical data.

In the context of AB‑100 preparation, candidates should understand how governance structures are embedded into architecture patterns and how they support sustainable, responsible, and secure AI operations.
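A periodic review for drift can be as simple as comparing a recent window of a metric against its baseline. The Python sketch below assumes a single numeric metric and a relative tolerance of 10 percent; both the `detect_drift` name and the threshold are illustrative choices, and real deployments often use more robust statistical tests.

```python
from statistics import mean


def detect_drift(baseline: list[float], recent: list[float],
                 tolerance: float = 0.10) -> bool:
    """Flag drift when the recent mean moves more than `tolerance` (relative) from baseline."""
    if not baseline or not recent:
        raise ValueError("both windows must contain at least one value")
    baseline_mean = mean(baseline)
    if baseline_mean == 0:
        raise ValueError("baseline mean must be non-zero for a relative comparison")
    relative_change = abs(mean(recent) - baseline_mean) / abs(baseline_mean)
    return relative_change > tolerance
```

A flagged result would typically trigger the review and refinement mechanisms listed above rather than an automatic change to the agent.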

Integrating Governance, Security, and Ethics into Architecture

Ultimately, governance, security, and responsible AI are not add‑ons—they are integral architectural layers that intersect with planning, design, deployment, and operations. Designing with these considerations in mind ensures that autonomous systems are not only powerful but also trustworthy and aligned with enterprise expectations.

This focus transforms agentic AI solutions from experimental prototypes into enterprise‑grade systems capable of driving meaningful business outcomes without compromising organizational integrity or stakeholder trust.

Step 7: Prepare for Scenario‑Based and Architecture Questions

One of the defining characteristics of the Microsoft AB‑100: Agentic AI Business Solutions Architect exam is its emphasis on scenario‑based questions that require architectural reasoning rather than rote recall. Instead of asking candidates to list features or memorize service names, the exam presents realistic business challenges, layered with contextual constraints, and asks you to determine the most appropriate solution or design approach. This mirrors the responsibilities solution architects face in enterprise environments, where decisions must balance business objectives, technical viability, governance requirements, and operational realities.

Successfully preparing for this type of assessment requires a shift in study mindset—from memorizing isolated facts to practicing architectural thinking and scenario interpretation. This section unpacks what scenario‑based questions look like, why they matter, and how to approach them strategically.

The Nature of Scenario‑Based Questions in AB‑100

In the AB‑100 exam, questions are framed around immersive business contexts. Rather than a single technical task, you are presented with a situation that includes business drivers, constraints, stakeholder needs, and often incomplete information. Your challenge is to decide how best to architect a solution that satisfies these multiple dimensions.

For example, a scenario might describe an organization struggling with inconsistent customer service experiences across regions. You might be asked to identify how agentic AI could unify workflows, integrate with existing CRM systems like Dynamics 365, enforce compliance standards, and measure success against business KPIs—all while managing cost and operational risk.

This type of question tests your ability to:

- Interpret business objectives and translate them into architectural drivers

- Evaluate multiple design alternatives and articulate why one is preferable

- Weigh trade‑offs such as complexity vs. maintainability, autonomy vs. control, or speed vs. scalability

The goal is not to find a “textbook answer” but to demonstrate sound architectural judgment in a context that reflects real enterprise needs.

Why Architectural Reasoning Matters

Architectural reasoning is the capacity to make informed, defensible decisions when designing systems. In practice, architects rarely have perfect information. They must balance competing constraints—budget, governance, data readiness, security, and strategic alignment. AB‑100 mimics this environment, expecting candidates to integrate concepts across planning, design, deployment, and governance domains.

Consider the examination domains:

- Planning AI‑Powered Business Solutions requires you to establish what problem should be solved and why—defining success criteria and identifying risks before any design work begins.

- Designing AI‑Powered Business Solutions tests your ability to select appropriate agents, integration patterns, and orchestration strategies based on scenario context.

- Deploying AI‑Powered Business Solutions evaluates whether your design choices account for operational controls, monitoring, governance, and responsible AI practice.

Scenario questions may touch on any of these domains—or several at once—thereby reinforcing that AB‑100 evaluates holistic architectural competence, not isolated competencies.

Components of a Strong Response

Responding effectively to a scenario‑based question involves a combination of analytical rigor and clear reasoning. Here’s how to approach the architecture challenges tested in AB‑100:

- Accurate Interpretation of Requirements: Begin by identifying the business intent behind the scenario. What are the primary outcomes the organization is trying to achieve? What constraints (technical, regulatory, or operational) are implicit? Good architectural reasoning starts with digging into these contextual signals.

- Alignment with Business Value: Your response should connect architectural decisions to business impact. This means recognizing performance metrics, user experience expectations, ROI considerations, and long‑term sustainability when recommending components or patterns.

- Trade‑off Analysis: In many scenarios, no single option is perfect. Strong candidates demonstrate balanced evaluation, acknowledging the merits and drawbacks of each architectural choice. For example, choosing a prebuilt Copilot agent may accelerate deployment but limit customization; opting for custom agent development increases flexibility but elevates governance overhead.

- Integration and Interoperability Considerations: Real‑world solutions rarely operate in isolation. A strong answer weaves in how your proposed architecture integrates with existing systems, data stores, governance frameworks, and operational monitoring tools. This demonstrates an appreciation for end‑to‑end solution coherence.
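For practice, the prebuilt versus custom agent trade-off described above can be made explicit with a weighted decision matrix. The Python sketch below is only a study aid: the criteria, the weights, and the 1 to 5 scores are invented for illustration and would differ for every scenario.

```python
def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Sum of score * weight over all criteria; weights are expected to sum to 1."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    return sum(scores[c] * weights[c] for c in weights)


# Hypothetical weights and 1-5 scores (higher is better for the business).
WEIGHTS = {"time_to_deploy": 0.4, "customization": 0.3, "low_governance_overhead": 0.3}
OPTIONS = {
    "prebuilt_agent": {"time_to_deploy": 5, "customization": 2, "low_governance_overhead": 4},
    "custom_agent": {"time_to_deploy": 2, "customization": 5, "low_governance_overhead": 2},
}

# Rank options from highest to lowest weighted score.
ranked = sorted(OPTIONS, key=lambda name: weighted_score(OPTIONS[name], WEIGHTS), reverse=True)
```

With these particular weights the prebuilt agent ranks first; shifting weight toward customization reverses the result, which is exactly the kind of scenario-dependent reasoning the exam rewards.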

Practicing Scenario Interpretation

Developing proficiency with scenario‑based questions requires a shift in study habits. Instead of memorizing platform details, adopt a practice regimen that includes:

- Reading enterprise case studies and mapping business challenges to architectural principles

- Decomposing example scenarios into business drivers, constraints, risks, and decision points

- Drafting architectural sketches that visualize how agents, integration layers, security controls, and governance mechanisms fit together

- Justifying design choices verbally or in writing, focusing on how they align with business and technical priorities

What to Expect in Exam Question Formats

While Microsoft does not publicly share the exact exam questions, candidates should be prepared for a variety of formats including:

- Multi‑part case scenarios where context evolves across sub‑questions

- Architecture choice comparisons, asking for the best fit among alternatives

- Constraint‑weighted decisions, where a particular requirement (e.g., security or compliance) must heavily influence the answer

- Interpretive questions that require synthesizing multiple inputs rather than recalling singular facts

Cultivating a Strategic Mindset

Preparing for scenario‑based architecture questions ultimately requires internalizing a mindset that transcends platform familiarity. Candidates must think like solution architects: absorbing business context, anticipating operational implications, balancing risks, and recommending solutions that are defensible, practical, and strategically aligned.

By engaging deeply with practice scenarios and reflecting on architectural decisions in light of business impact—as expected by the AB‑100 exam—you develop not only exam readiness but also professional maturity in designing AI‑enabled enterprise solutions.

Step 8: Use Microsoft‑Aligned Learning Resources Strategically

Effective preparation for the Microsoft AB‑100: Agentic AI Business Solutions Architect exam goes beyond accumulating facts; it requires targeted study anchored in official guidance and real‑world architectural patterns. Microsoft’s certification ecosystem is designed to support this depth of understanding by providing structured learning pathways, detailed documentation, and reference materials that mirror the competencies assessed in the exam. To maximize your readiness, it is important to use these resources not as checklists to memorize, but as frameworks to internalize architectural thinking and solution design principles relevant to agentic AI.

This section explores how to engage strategically with Microsoft‑aligned resources—transforming them from passive reading into active learning that builds your confidence and competence as an AI architect.

Understanding the Value of Official Documentation

Microsoft’s official certification pages and study guides serve as authoritative anchors for what the AB‑100 exam expects candidates to know. These resources outline the skill domains, define the scope of knowledge areas, and contextualize architectural responsibilities. However, their value lies not in rote consumption but in interpretation through the lens of architectural application.

For example, the AB‑100 study guide describes key domains such as planning, designing, and deploying AI solutions in business contexts. Rather than simply cataloging the topics, you should interpret these domains as architectural phases. Understanding how each phase contributes to the lifecycle of an AI solution will help you frame study activities that reflect real enterprise practice. By synthesizing the official documentation with practical architectural scenarios, you begin to see patterns and decision paths that are far more relevant to exam success than memorizing individual product names.

Integrating Learning Paths with Hands‑On Practice

Microsoft Learn offers structured learning paths that correspond to elements of the AB‑100 blueprint. These interactive modules are valuable because they pair conceptual content with environment sandboxes and practical exercises. However, to benefit most from them, align your engagement with these learning paths to scenario‑based questions and architectural reasoning:

- Treat each module as an opportunity to not just learn what a service does, but why and when it should be used in a business solution.

- As you complete exercises, reflect on how the exercise maps to real business requirements and what architectural decisions it implicitly represents.

- Use sandbox environments to replicate scenarios where you design, configure, or integrate agentic AI components using services like Copilot Studio and Microsoft Foundry.

This approach helps contextualize theoretical concepts within enterprise architectures, directly strengthening your capacity to answer scenario‑based exam questions.

Cross‑Referencing with Study Guides and Reference Architecture Examples

The official AB‑100 study guides articulate the domains and sub‑competencies tested on the exam. To deepen your understanding, avoid viewing these guides as static outlines. Instead, treat them as roadmaps for practice:

- Identify the architectural patterns described within each domain.

- Correlate these patterns with Microsoft’s reference architectures, whitepapers, and documentation examples.

- Analyze how these patterns respond to varying business contexts, data scenarios, or governance constraints.

For instance, if a study guide section discusses orchestration of autonomous agents, refer to Microsoft’s architecture examples on agent interactions and integration with business systems. Cross‑referencing in this way reinforces both conceptual grounding and practical comprehension.

Leveraging Community and Shared Expertise

While official resources form the backbone of your preparation, there is significant value in engaging with the broader Microsoft community—forums, GitHub repositories, official blogs, and technical discussions. Experienced architects often share insights about architectural trade‑offs, decision drivers, and real implementation challenges that illuminate nuances not found in product documentation.

Approach community resources strategically:

- Look for discussions that explore architectural reasoning rather than tool configuration.

- Seek out case studies and implementation narratives that mirror enterprise challenges.

- Compare multiple viewpoints to understand where architectural approaches converge or diverge.

Community insights should not replace official documentation, but they augment your perspective, helping bridge the gap between theoretical understanding and real practice.

Practicing with Mock Scenarios and Assessment Tools

In addition to official learning paths and documentation, many platforms provide practice assessments designed to simulate the style and depth of AB‑100 questions. These assessments can help you practice synthesizing architectural scenarios, evaluating trade‑offs, and articulating reasoned solutions.

When using these tools:

- Approach practice questions with the same rigor expected in the exam: read the context fully, identify underlying business drivers, and justify your architectural choices.

- Analyze explanations for both correct and incorrect responses to understand why a particular pattern is preferred in context.

- Use assessment feedback to identify areas where additional study or hands‑on practice is needed.

Mock scenarios help you internalize the architectural mindset tested in AB‑100 and reinforce disciplined thinking across multiple domains.

Creating a Personalized Study Framework

Finally, integrate all of these resources into a personal study framework that reflects your learning style and professional background. Doing so helps ensure that your preparation is focused on deep comprehension rather than surface‑level familiarity.

Consider organizing study around:

- Conceptual pillars: such as architectural design principles, governance models, and integration strategies.

- Scenario analysis practice: where real business cases are decomposed into architectural decisions and evaluated in context.

- Tool and service exploration: where hands‑on experimentation is used to validate conceptual choices and design patterns.

This multi‑layered approach reinforces the connections between what you learn and how you think as an architect, enabling you to engage more effectively with both the exam and real enterprise challenges.

Step 9: Validate Readiness with Structured Revision

Reaching an advanced level of understanding in the Microsoft AB‑100: Agentic AI Business Solutions Architect certification requires more than breadth of study; it demands disciplined and reflective revision. At this stage of your preparation, structured revision becomes a vital mechanism to confirm depth of comprehension, identify residual gaps, and transform accumulated knowledge into reliable architectural judgment. Rather than treating revision as repetitive memorization, this phase reframes review as an opportunity to integrate conceptual understanding, scenario interpretation skills, and practical patterns into a cohesive mental model that aligns with real enterprise expectations.

This step focuses on organizing your learning artifacts into a systematic revision cycle—one that mirrors the multi‑layered nature of architectural thinking central to the AB‑100 exam.

Begin with Conceptual Synthesis

The most effective revision begins with synthesis—assembling discrete pieces of knowledge into an interconnected framework. Given the complexity of the AB‑100 domains, it is helpful to revisit each domain holistically:

- Planning AI‑Powered Business Solutions: Reaffirm your approach to reading business requirements, assessing data readiness, and defining strategic intent. In revision, ask yourself how your architectural approach would shift if key variables—like stakeholder constraints or regulatory requirements—change.

- Designing AI‑Powered Business Solutions: Consolidate your understanding of architectural patterns, agent orchestration standards, and integration strategies. Re‑examine how specific design choices map to business outcomes and governance constraints.

- Deploying AI‑Powered Business Solutions: Focus revision on operationalization practices, governance embedding, and monitoring frameworks. Reflect on how governance, security, and responsible AI are woven into deployment patterns.

Reframe Notes into Narrative Understandings

As part of revision, transform your study notes from lists and bullet points into narrative frameworks that describe how concepts relate to one another in real scenarios. For example, rather than listing features of Copilot Studio or Microsoft Foundry, craft short summaries that explain:

- Why a particular architectural pattern matters in enterprise solution design.

- How specific Microsoft services contribute to agent integration.

- What governance or security considerations should influence architectural decisions.

This narrative approach forces you to articulate relationships and dependencies between concepts—an ability that is critical for interpreting scenario‑based questions on the AB‑100 exam. It also mirrors how seasoned architects communicate design rationale in professional environments.

Simulate Realistic Architectural Decisions

Validation is not complete without applied rehearsal. Structured revision should incorporate simulated decision‑making exercises that reflect the style and depth of AB‑100 scenarios. Create or source fictional case studies that require:

- Translating business objectives into architectural drivers.

- Comparing alternative solution paths and justifying your choice based on business impact, governance, and operational risk.

- Decomposing complex requirements into prioritized design elements.

While practice questions are useful, deeper value lies in simulated scenario narratives that demand strategic reasoning, trade‑off analysis, and contextual interpretation—not just knowledge recall. This prepares you to confront the kind of multi‑dimensional questions that the AB‑100 exam emphasizes.

Intensify Focus on Intersections Between Domains

One hallmark of the AB‑100 exam is that questions often span multiple domains simultaneously. During structured revision, deliberately design exercises that force you to integrate multiple facets of agentic AI architecture. For example:

- How do governance and security considerations alter your design of a multi‑agent orchestration solution?

- How might planning decisions around data readiness influence deployment monitoring frameworks?

By training yourself to think at these intersections, you develop the cognitive flexibility needed to address the holistic challenges presented in the exam.

Use Official Guides as Diagnostic Tools

Revisit the official AB‑100 study guide not as a checklist to be ticked off, but as a diagnostic instrument. For each skill objective outlined in the guide:

- Assess your level of confidence.

- Identify areas where your reasoning feels tentative or incomplete.

- Write short reflections on how you would architect solutions that satisfy the objectives in practical settings.

This practice converts the official skill statements into active revision prompts, anchoring your review to the precise competencies Microsoft expects.

Practice Articulating Architectural Rationale

Structured revision is also an opportunity to refine the way you express architectural reasoning. The AB‑100 exam prizes not just the end choice but the rationale behind it. To rehearse this skill:

- Compose short written explanations of why one architectural path is preferable to another.

- Record yourself explaining architectural decisions and listen for clarity and coherence.

- Engage in peer discussions or study groups where you must defend architectural choices.

These practices elevate your ability to articulate reasoning, a skill that separates proficient candidates from those who know concepts but struggle to apply them effectively.

Calibrate Against Performance Indicators

Finally, as part of structured revision, adopt a self‑assessment mindset grounded in observable performance indicators:

- Accuracy in scenario interpretation.

- Ability to justify architectural decisions with business and governance context.

- Consistency in mapping solution elements to strategic outcomes.

Use practice results, simulated scenario responses, and narrative explanations to calibrate your readiness. This calibration helps you manage your preparation time effectively and ensures that your revision bridges the gap between knowledge and architectural judgment.

Final Thoughts

Preparing for the Microsoft AB‑100: Agentic AI Business Solutions Architect exam is more than memorizing services or features—it is a comprehensive journey that develops strategic, business‑oriented, and technically grounded architectural thinking. Each step in this guide, from understanding the role and exam intent to structured revision, has been designed to cultivate a mindset that mirrors real enterprise practice: analyzing business objectives, designing intelligent solutions responsibly, balancing trade‑offs, and embedding governance and security at every layer.

Success in the AB‑100 exam comes from integrating knowledge, applying scenario‑based reasoning, and validating your decisions against business and ethical imperatives. By approaching your preparation with intentionality, leveraging Microsoft‑aligned resources strategically, and rehearsing architectural decisions through simulated scenarios, you not only enhance your exam readiness but also build practical skills that will serve you throughout your career as a solution architect in the evolving era of agentic AI. This guide provides a roadmap to mastery, enabling you to approach the exam with confidence, clarity, and a professional mindset that extends far beyond certification.